Thanks For Rating

Reminder successfully set, select a city.

- Nashik Times

- Aurangabad Times

- Badlapur Times

You can change your city from here. We serve personalized stories based on the selected city

- Edit Profile

- Briefs Movies TV Web Series Lifestyle Trending Medithon Visual Stories Music Events Videos Theatre Photos Gaming

Farah Khan reveals she earned more than Shah Rukh Khan on Kabhi Haan Kabhi Naa : ‘He was paid Rs 25,000; I was paid per song’

Ranbir Kapoor’s 'Ramayana' co-star Indira Krishna shares a BTS picture from the sets; Thanks him for ‘love and care’

Jason Shah opens up about his experience on the 'Heeramandi' set; 'Lacked the simple niceties of human nature'

With a net worth of approx Rs 742 crore, Akshay Kumar is the ‘Khiladi’ in both Bollywood and business

Exclusive: Sonakshi Sinha-Zaheer Iqbal Wedding! Dress code for the June 23 event revealed

BTS' agency urges fans to refrain from visiting Jin’s discharge site, citing safety concerns

- Movie Reviews

Movie Listings

Bajrang Aur Ali

Return Ticket

Chhota Bheem And The C...

Mr. & Mrs. Mahi

Barah x Barah

Amala Paul's stunning saree looks

Breathtaking pictures of Isha Talwar

Pictures that redefine the eternal beauty of Tanya Hope

Elevate your wardrobe: Style lessons from Nyla Usha

Apurva Gore’s exquisite saree collection draws attention

Vibrant pictures of Nayanthara

Gorgeous pics of Komal Thacker

Bhojpuri actors who also worked in several industry

How to make 4 ingredient South-Indian Appam for breakfast

Aditi Rao Hydari exudes Bibbojaan vibes in an ethereal molten gold ensemble

Dedh Bigha Zameen

Chhota Bheem And The Cu...

House Of Lies

Bad Boys: Ride Or Die

Jim Henson: Idea Man

Fast Charlie

The Strangers: Chapter ...

The Beach Boys

Furiosa: A Mad Max Saga

Thelma The Unicorn

Bujji At Anupatti

Pagalariyaan

Konjam Pesinaal Yenna

Bhaje Vaayu Vegam

Gam Gam Ganesha

Gangs Of Godavari

Aa Okkati Adakku

Prasanna Vadanam

Avatara Purusha 2

Chow Chow Bath

Hide And Seek

Somu Sound Engineer

Nayan Rahasya

Bonbibi: Widows Of The ...

Pariah Volume 1: Every ...

Shinda Shinda No Papa

Sarabha: Cry For Freedo...

Zindagi Zindabaad

Maujaan Hi Maujaan

Chidiyan Da Chamba

White Punjab

Any How Mitti Pao

Gaddi Jaandi Ae Chalaan...

Buhe Bariyan

Swargandharva Sudhir Ph...

Naach Ga Ghuma

Juna Furniture

Alibaba Aani Chalishita...

Aata Vel Zaali

Shivrayancha Chhava

Devra Pe Manva Dole

Dil Ta Pagal Hola

Ittaa Kittaa

Jaishree Krishh

Bushirt T-shirt

Shubh Yatra

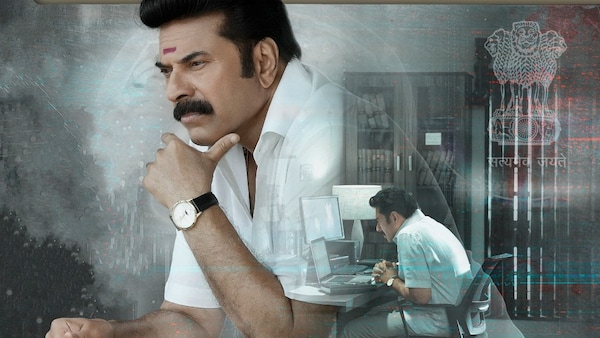

- CBI 5: The Brain

Your Rating

Write a review (optional).

- Movie Reviews /

- Malayalam /

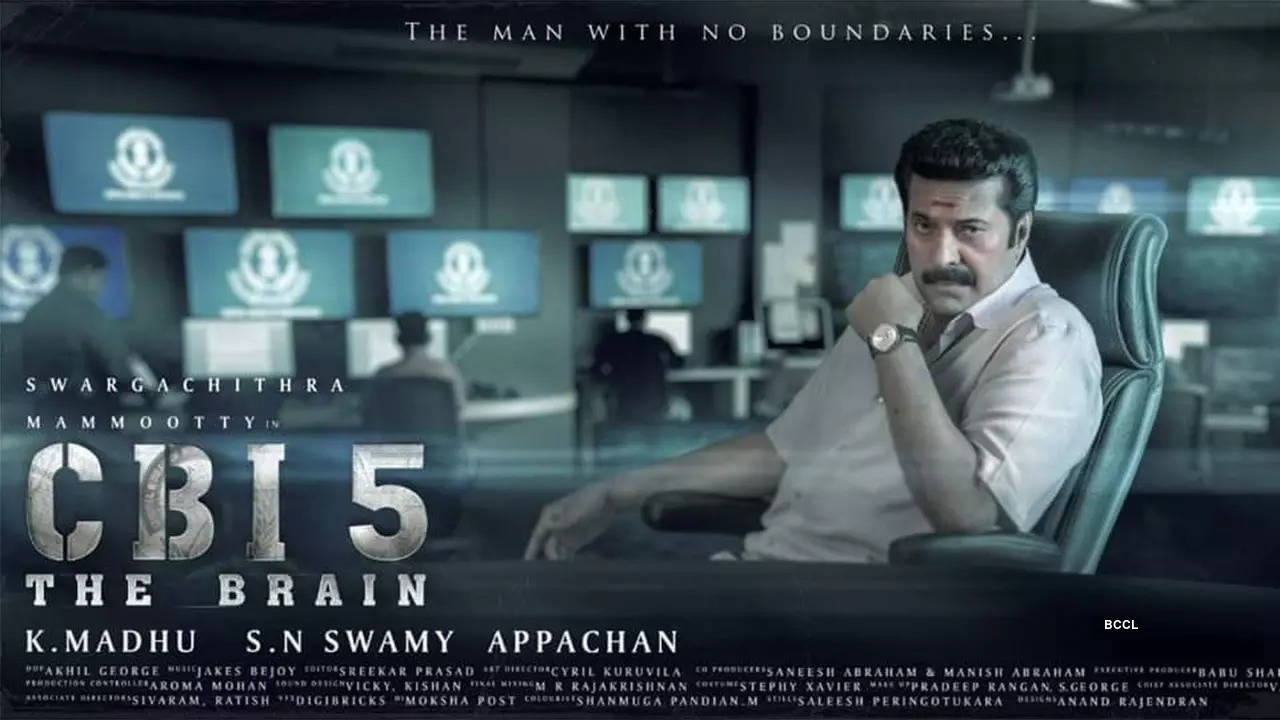

CBI 5: The Brain UA

Would you like to review this movie?

Cast & Crew

CBI 5: The Brain Movie Review : A tantalising and twisty crime thriller

- Times Of India

CBI 5: The Brain - Official Trailer

CBI 5: The Brain - Official Teaser

Users' Reviews

Refrain from posting comments that are obscene, defamatory or inflammatory, and do not indulge in personal attacks, name calling or inciting hatred against any community. Help us delete comments that do not follow these guidelines by marking them offensive . Let's work together to keep the conversation civil.

Arpitha S 155 486 days ago

It is actually Vinay in the 2nd para not sharath

Pradeep D 555 days ago

നിങ്ങളുടെ അവലോകനം ഇവിടെ എഴുതുക...(ഓപ്ഷണൽ)

nihalnazz 661 days ago

Guest 728 days ago.

very good movie

Alok 81604 755 days ago

Fantastic movie with a very interesting story and some amazing ideas.

Visual Stories

Reem Sameer’s top 15 glamorous looks

8 signs that your digestive system is compromised

Entertainment

Karishma Tanna's vacation pics will ignite your wanderlust craving

9 popular non-vegetarian dishes to try in Kerala

International Lynx Day: 10 amazing facts about these elusive jungle cats

10 decadent beverages you can make with chocolate

Anushka Shetty mesmerises with her stunning saree collection

Habits of successful leaders in the workplace

News - CBI 5: The Brain

Kaniha and her husband celebrate 14 years of togetherne...

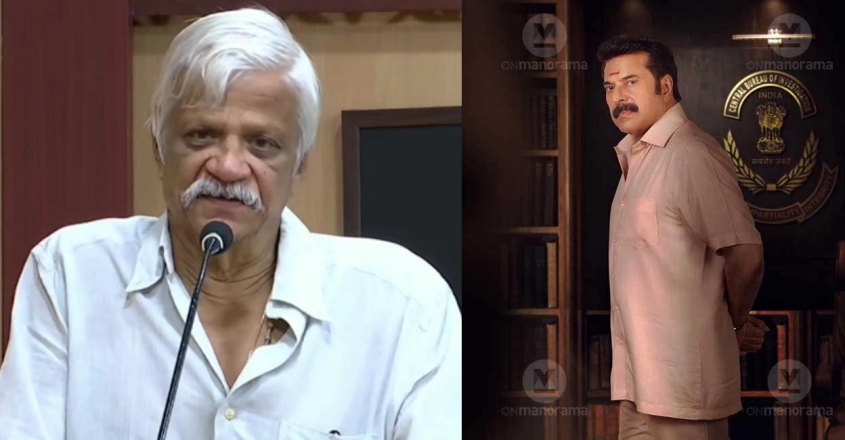

Writer NS Madhavan points out flaws in Mammootty’s ‘CBI...

Mammootty’s ‘CBI 5: The Brain’ gets an OTT release date...

‘CBI 5: The Brain’ Box Office Collection Day 9: Mammoot...

CBI 6: Is Mammootty and K Madhu planning for the next i...

‘CBI 5: The Brain’ Box Office Collection Day 1: Mammoot...

SUBSCRIBE NOW

Get reviews of the latest theatrical releases every week, right in your inbox every Friday.

Thanks for subscribing.

Please Click Here to subscribe other newsletters that may interest you, and you'll always find stories you want to read in your inbox.

Popular Movie Reviews

Gaganachari

Little Hearts

Varshangalkku Shesham

The Goat Life

Guruvayoorambala Nadayil

Sureshanteyum Sumalathayudeyum...

Marivillin Gopurangal

Log in or sign up for Rotten Tomatoes

Trouble logging in?

By continuing, you agree to the Privacy Policy and the Terms and Policies , and to receive email from the Fandango Media Brands .

By creating an account, you agree to the Privacy Policy and the Terms and Policies , and to receive email from Rotten Tomatoes and to receive email from the Fandango Media Brands .

By creating an account, you agree to the Privacy Policy and the Terms and Policies , and to receive email from Rotten Tomatoes.

Email not verified

Let's keep in touch.

Sign up for the Rotten Tomatoes newsletter to get weekly updates on:

- Upcoming Movies and TV shows

- Trivia & Rotten Tomatoes Podcast

- Media News + More

By clicking "Sign Me Up," you are agreeing to receive occasional emails and communications from Fandango Media (Fandango, Vudu, and Rotten Tomatoes) and consenting to Fandango's Privacy Policy and Terms and Policies . Please allow 10 business days for your account to reflect your preferences.

OK, got it!

Movies / TV

No results found.

- What's the Tomatometer®?

- Login/signup

Movies in theaters

- Opening this week

- Top box office

- Coming soon to theaters

- Certified fresh movies

Movies at home

- Fandango at Home

- Netflix streaming

- Prime Video

- Most popular streaming movies

- What to Watch New

Certified fresh picks

- Hit Man Link to Hit Man

- Am I OK? Link to Am I OK?

- Jim Henson Idea Man Link to Jim Henson Idea Man

New TV Tonight

- The Boys: Season 4

- Bridgerton: Season 3

- Presumed Innocent: Season 1

- The Lazarus Project: Season 2

- The Big Bakeover: Season 1

- How Music Got Free: Season 1

- Love Island: Season 6

Most Popular TV on RT

- Star Wars: The Acolyte: Season 1

- Eric: Season 1

- Ren Faire: Season 1

- House of the Dragon: Season 2

- Sweet Tooth: Season 3

- Dark Matter: Season 1

- Evil: Season 4

- Clipped: Season 1

- Fallout: Season 1

- Best TV Shows

- Most Popular TV

- TV & Streaming News

Certified fresh pick

- Star Wars: The Acolyte: Season 1 Link to Star Wars: The Acolyte: Season 1

- All-Time Lists

- Binge Guide

- Comics on TV

- Five Favorite Films

- Video Interviews

- Weekend Box Office

- Weekly Ketchup

- What to Watch

Best Movies of 2024: Best New Movies to Watch Now

30 Most Popular Movies Right Now: What to Watch In Theaters and Streaming

What to Watch: In Theaters and On Streaming

Weekend Box Office: Bad Boys Ride to $56.5 Million Debut

Movie Re-Release Calendar 2024: Your Guide to Movies Back In Theaters

- Trending on RT

- The Acolyte First Reviews

- Vote: 1999 Movie Showdown

- TV Premiere Dates

- House of the Dragon First Reviews

CBI 5: The Brain Reviews

Delightfully star-studded and twisty, the film is a mystery-laden treat to those who love the franchise and the genre. Just stay away from spoilers as smartly as you can!

Full Review | Original Score: 3.5/5 | May 4, 2022

What makes the film harder to hold on to the flatness in the staging. With so many scenes set inside the CBI office with officers discussing the case, a true crime podcast would have sufficed to tell the same story.

Full Review | May 4, 2022

CBI 5: The Brain

Where to Watch

Mammootty (Sethurama Iyer) Jagathy Sreekumar (Vikram) Mukesh (Chacko - CBI DySP) Anoop Menon (IGP K.C. Unnithan) Renji Panicker (Balagopal - CBI DySP) Saikumar (DySP Sathyadas) Soubin Shahir (Paul Meyjo) Asha Sharath (Adv. Prathibha Sathyadas) Sudev Nair (Sub-inspector Iqbal Hussain) G. Suresh Kumar (Abdul Samad - Home Minister)

A series of murders is happening in the city. With the police failing to solve the case, a team of CBI Officers under CBI officer Sethurama Iyer take up the investigation to resolve the mystery.

Recommendations

Advertisement

- WEB STORIES New

- ENTERTAINMENT

- CAREER & CAMPUS

- INFOGRAPHICS

- T20 World Cup 2024

- Manorama Online

- Manorama News TV

- ManoramaMAX

- Radio Mango

- Subscription

- Entertainment

CBI 5: The Brain starring Mammootty review: Sethurama Iyer does it again

The audience cheering the name of the production banner on the screen is a rare spectacle. That is exactly what happened when 'Swargachithra Productions' appeared on screen at the release of the movie 'CBI5: The brain'. The same happened when the famed BGM sounded in the hall. The theatre response to the opening of the movie evoked a nostalgic fervour and testified to how deeply the iconic series has been entrenched in the hearts of the Malayali audience.

Investigation stories are always thrilling. And when a cult series is headlined by the same iconic actors, the charm is manifold. Written by S N Swamy and directed by K Madhu, 'CBI 5: The Brain' sets the stage ready for the thunderous applause for the entry of Sethurama Iyer. And the brouhaha continued at every interval as each of the celebrated characters appeared. The laudatory hoots and claps when Jagathy Sreekumar appeared were deafening and reminded the magic the living legend once weaved on the screen.

The crimes are the same and entangled in the same mesh of politics-mafia-corrupt police nexus. The means to unravel the mystery are also the same. But what strikes the most is the flow of sequences arrayed engagingly. Another commendable part is that Mammootty as Sethurama Iyer is more poised, grounded and accessible than he was in his previous outings.

Mammootty, Parvathy starrer 'Puzhu': Expect the unexpected

There are deliberate attempts to degrade 'CBI 5': SN Swamy

The whole episode of the crime investigation is unveiled as part of a flashback at a training camp for a group of newly appointed IPS officers. The tale unwinds as one of the young officers' queries about a case, which the CBI had found tough to crack. Balagopal (Renji Panicker) narrates the case which was the hardest ever in its history and with the legendary Sethurama Iyer at the helm of the investigation team.

The case follows a trail of deaths some years back, which later turned out to be heinous murders, starting with that of a minister on a flight from Delhi to Kochi. The incident is followed by a series of deaths.

Though they all seemed natural deaths prima facie, the police smelt something fishy about them after detecting some connection in all the events. The police team led by DySp Sathyadas (Saikumar) nabbed a suspect in connection with the crime, the case tagged as 'basket killings' is eventually passed on to the CBI. And, naturally, Sethurama Iyer begins the game amid thunderous claps.

The differences between the Kerala Police and the CBI, the lack of proper evidence to nail the culprit, findings that leave no clue to the motive of the crimes baffle the investigation team and the viewers. The brilliance and charisma exuded by Sethurama Iyer ring in hope. However, the narrative is fraught with events and stories inside the stories rather than hurdles that challenge the top investigating officer and his team.

What displays the ingenuity of the top CBI cop is his ability to look "beyond" the seemingly possible conclusion of events and motives that are laid bare before the police. And the answer to why the movie is titled 'The Brain' is best read from the screen only.

Asha Sharath as advocate Prathibha, Soubin Shahir as Mansoor (Sandeep), Anoop Menon as IG Unnithan, Kaniha as Susan are characters who play crucial parts in the saga. Other actors, including Mukesh, Ansiba Hassan, Malavika Menon, Idavela Babu, Ramesh Pisharody, Prasanth Alexander, Kottayam Ramesh play prominent roles.

While Akhil George's camera closely follows the heat of moments, Jakes Bejoy's music, the remixed version of Shyam's original BGM track, has come of the age and gels well with the changing times.

A few misses in simple methods of evidence collection, some distracting indicators, and evidence, which are contradictory and are weak in logic, including the final one which nails the culprit may seem worrisome. But they fail to spoil the thrill of the roller-coaster ride. The movie in fact keeps a tight grip on viewers right from the start to finish. It is the brand of the franchise that outweighs the narrative and the premise. The makers are successful in creating yet another mystery thriller without losing the magnitude of the series and the novelty of the times.

- Movie Review

- Malayalam Cinema

'Golam' Movie Review: Ranjith Sajeev and Dileesh Pothan star in an intriguing whodunnit

A familiar, yet intriguing twist keeps this film on fatherhood afloat: Swakaryam Sambhavabahulam Review

Debutant Mubin M Rafi impresses in Nadirshah’s 'Once Upon a Time in Kochi' | Review

'Mandakini' Movie Review: A slow starter powered by the mass performance of female stars

'Thalavan': A well-crafted investigative thriller with strong performances from Biju Menon, Asif Ali | Movie Review

Mammootty's banter, Raj Shetty's villainy drive this imbalanced narrative | Turbo movie review

'Sureshinteyum Sumalathayudeyum...' may not be everyone's cup of tea, but it is enjoyable | Movie Review

'Guruvayoor Ambalanadayil': Prithviraj-Basil Joseph starrer stays entertaining despite predictable turns | Movie Review

'Marivillin Gopurangal': A smooth ride convincingly steered by Indrajith, Vincy Aloshious | Movie Review

- Firstpost Defence Summit

- Entertainment

- Web Stories

- Health Supplement

- First Sports

- Fast and Factual

- Between The Lines

- Firstpost America

CBI 5: The Brain movie review — Scrapes through by riding on nostalgia and Mammootty’s towering presence

Mammootty continues to imbue Iyer with dignity and gravitas, and ultimately, he is CBI 5’s saving grace.

)

Language: Malayalam

The first clue to where this film is headed lies in its grandiose title, CBI 5: The Brain . This is the fifth instalment of the popular CBI franchise that began with Oru CBI Diary Kurippu in 1988. Each one has been directed by K. Madhu, written by S.N. Swamy and starred Mammootty as CBI officer Sethurama Iyer, an icon of the agency and a genius. But why “the brain”? The choice of words is a harbinger of the film’s tendency to stuff ill-fitting English lines into conversations throughout the narrative, all of them stiffly written and awkwardly delivered.

CBI 5: The Brain begins with CBI officers Balagopal (Renji Panicker) and Vinay (Ramesh Pisharody) addressing a class of new IPS recruits. The lecture leads to a discussion about how Iyer solved a series of murders that came to be known as the Basket Killings in their time. Curious nickname, but again, inexplicable.

The mystery is initially interesting. A minister dies of a heart attack on a flight. This is followed by a crop of tragic deaths – an accident, a suicide, a hit-and-run incident. The CBI is called in to crack the case. Enter: Iyer.

Although a hero’s late entry is a cliché in commercial cinema to get the audience excited about the arrival of a superstar – usually male – on screen, and Mammootty’s directors have had a particular fondness for this device for a long time now, it can still be entertaining when well handled. In CBI 5 though, the effect is unremarkable.

Some thrillers pride themselves on challenging the viewer by being so oblique that they offer only fleeting glimpses of crucial pointers. In CBI 5: The Brain , the music, camera angles, acting and/or dialogues are used to over-explain every reason for suspicion, despite which we are left with some loose ends

Shyam’s background score for the early CBI films was sharp and energetic. Much water has flowed under the bridge and through Malayalam cinema’s music landscape since then, and in CBI 5: The Brain , Jakes Bejoy’s score feels almost generic, most notably the theme assigned to Soubin Shahir’s character. Yes, yes, we get it, he is meant to be an eerie fellow. How much will that point be underlined?

Oru CBI Diary Kurippu became a landmark because of the hero’s methodical approach to investigations, and conversations that sounded real, belying the pace and hectic activity on screen.

Instead of staying true to the original’s tone, CBI 5 throws bombastic and stilted lines into the mix in what appears to be a misplaced attempt at contemporisation.

This is ironic because a low pitch would have ended up echoing the middle-of-the-road slice-of-life new Malayalam New Wave that has been winning over audiences in Kerala for the past decade or so, and increasingly now outside Kerala too.

Sethurama Iyer’s trademark meticulousness remains, and for the first half, CBI 5 ’s plot offers a fair share of suspense. After a while though, it becomes too convoluted, too crowded with people and twists, and towards the end, those twists are too contrived to be convincing.

When the climactic revelation is made, the casualness towards mental health is off-putting. This is a far cry from the extreme sensitivity and awareness of mental well-being that Malayalam cinema has shown in recent years in a range of films including, perhaps most prominently, Kumbalangi Nights and #Home .

CBI 5 ’s impressively mounted opening credits may hold greater meaning for those who have been following the series, but even for those who have not, they serve as a reminder that this is a legacy film. The reliance on nostalgia works in the scene featuring Jagathy Sreekumar because it is poignant irrespective of whether you have watched the earlier films or know of the actor’s medical condition in recent years. It falls flat, though, in the jocular treatment of the recurring character Sathyadas (Saikumar). Unless you have seen the previous CBI instalments, the jestful tone even in his introductory scene is inexplicable. It has been 17 years since the fourth film was made, and a whole new generation of movie-goers are now visiting theatres, so it makes no sense to leave such a major portion of the film dependent on the public’s memory of Parts 1-4.

The cast of CBI 5: The Brain consists of many artistes with a solid track record, but they are either wasted here or left to struggle with inadequate writing. The Kerala State Award winner Swasika , for instance, is given a minor, under-explored role. So is Dileesh Pothan.

The effort to keep Soubin Shahir’s character intriguing and intimidating has the reverse effect – the screenplay is trying too hard with him, and so is the actor. To see Soubin misfire after so many back-to-back bull’s-eyes, including most recently in Bheeshma Parvam , is disappointing. Anoop Menon gets far more to chew on in the script, but is unable to handle it.

It can be exasperating to watch a film leaning too obviously on Mammootty’s larger-than-life personality to get by. Whatever CBI 5 ’s errors may be, thankfully it does not go the whole hog on this front with a surfeit of low-angle shots and a raucous signature tune or some tacky quirk for Sethurama Iyer set in the mould of The Great Father , White and some of the other terrible films that the legendary actor has chosen to star in in the past couple of decades. CBI 5 does let Mammootty dominate every scene in which he is present, but it holds back enough to allow the actor’s naturally towering presence to do its job. It is not as if Mammootty does anything new here, and the fact is, he falters with those oddly written English lines, but he is who he is, a giant who we have grown up loving, an actor who continues to imbue Iyer with dignity and gravitas, and ultimately, he is CBI 5 ’s’s saving grace.

Rating: 2.5 (out of 5 stars)

This review was first published when CBI 5: The Brain was released in theatres in May 2022. The film is now streaming on Netflix.

Anna M.M. Vetticad is an award-winning journalist and author of The Adventures of an Intrepid Film Critic. She specialises in the intersection of cinema with feminist and other socio-political concerns. Twitter: @annavetticad, Instagram: @annammvetticad, Facebook: AnnaMMVetticadOfficial

Read all the Latest News , Trending News , Cricket News , Bollywood News , India News and Entertainment News here. Follow us on Facebook , Twitter and Instagram .

Find us on YouTube

Related Stories

)

'Bigg Boss' fame Nimrit Kaur passes on her role in 'Love Sex Aur Dhokha 2' due to explicit scenes: Report

)

Crew: Singer Badshah drops teaser of his collaboration with Diljit Dosanjh from Kareena Kapoor, Tabu, Kriti Sanon's comedy

)

Anant Ambani-Radhika Merchant's Pre-Wedding Festivities: Saif Ali Khan-Kareena Kapoor, Ranveer Singh-Deepika Padukone exude royalty for the 'Desi Romance' theme

)

WATCH: Shah Rukh Khan, Salman Khan, Aamir Khan dance on 'Naatu Naatu' at Anant Ambani-Radhika Merchant's pre-wedding festivities

)

- International

- Today’s Paper

- Join WhatsApp Channel

- Movie Reviews

- Tamil Cinema

- Telugu Cinema

CBI 5 The Brain Review: Mammootty effortlessly transforms into Sethurama Iyer in an intelligently woven script

Cbi 5 the brain review: malayalam superstar mammootty is effortless as sethurama iyer in this fifth installment of the beloved cbi franchise. makers have already hinted at a sixth possible film..

Ending all the speculations and rumors about the storyline, Mammootty ‘s Sethurama Iyer is back, and just like in the past, he is soft, subtle, sharp, and smooth when it comes to investigating a case from the scratch. CBI 5 The Brain, the fifth chapter in the CBI franchise, directed by K Madhu and written by SN Swami, has all the elements to satisfy a Sethurama Iyer fan, and thriller fans in general.

SN Swami deserves respect for coming up yet again with a script that keeps the viewers engaged till the end when Malayalam cinema is going deeper into the investigative thriller genre. Also, Mammootty for the fifth time, maintains the charm and calmness needed for the iconic character of Sethurama Iyer. A feat in itself.

CBI 5 begins through a flashback with Balagopal (Renji Panicker), a CBI officer narrating the story to a batch of IPS trainees about one of the most baffling cases where even the CBI was clueless at a point. The case starts with the death of a minister named Samad in a flight, which is followed by a series of murders — of people associated with the minister. That’s when Sethurama Iyer is assigned to investigate this high-profile case. There are many diversions, suspects, and subplots that naturally fit into the narrative starting from a terrorist link, to a personal grudge, corrupt police officers, extra marital affair and a criminal who uses cutting edge technology.

The most interesting part of the movie was the reference of assassinations that took place in the Gandhi family. Vikram, played by Jagathy Sreekumar, who was integral in the past CBI movies, was used with conviction, which proved to be another highlight of the feature. It was heartwarming to see Jagathy, the legendary actor of Malayalam cinema who is in a paralyzed state for years now after an unfortunate road accident, was used in the movie at a very crucial juncture with definite purpose.

Mammootty, as usual, was effortless as Sethurama Iyer. Some of his minimalistic expressions were enough to create impact in theaters. Sai Kumar also took off from where he had left in the third part of CBI, reminding one of late actor Sukumaran’s mannerisms. Renji Panicker couldn’t a create connection like Mukesh’s character Chacko or Jagathy’s Vikram. His character was, at best, peripheral. Jagathy, though, played his part like a dream. Director deserves praise for the way he used the iconic comedian.

There are some undefined links in the storyline, especially the missing personal staff of the murdered minister and the terrorist link mentioned in the first part. The latter part of the second half seems like too much information is being packed in a very short frame of time; a hurried, half-hearted attempt to tie the loose ends, which can leave the audience groping in the dark.

But, for diehard fans, we have some good news. Makers have already hinted at a sixth movie of the beloved franchise.

CBI 5 The Brain movie cast: Mammootty, Mukesh, Jagathy Sreekumar, Saikumar, Renji Panicker, Dileesh Pothan, Malavika Menon, Shoubin Shahir

CBI 5 The Brain movie director: K Madhu

Can PM Modi turn a coalition to his advantage? Subscriber Only

MCQ-based examination isn't the right way to spot doctors Subscriber Only

UPSC Key | Agnipath scheme, neighbourhood first policy, constitutionalism, and Subscriber Only

How PCOS, ignorance of red flags landed this 29-year-old with Subscriber Only

Art and Culture with Devdutt Pattanaik | Skylines of Bharat Subscriber Only

On agriculture front, an agenda for the new government Subscriber Only

What we lose when we focus on identity politics Subscriber Only

‘Heatwaves will now become more frequent, durable and intense’ Subscriber Only

Tavleen Singh writes: We deserve better leaders Subscriber Only

In his first public remarks on the outcome of the elections in which the BJP fell short of a majority in Lok Sabha, RSS chief Mohan Bhagwat said Monday that a true sevak (one who serves the people) does not have “ahankar” (arrogance) and works without causing any hurt to others. Referring to the bitter poll campaign, he said “decorum was not maintained”.

More Entertainment

Best of Express

Jun 11: Latest News

- 01 Porsche crash case | ‘Strong possibility’ that parents destroyed original sample of blood: Cops to court

- 02 Oxford University to return stolen 500-year-old bronze idol to India

- 03 Apple WWDC 2024: From Lock Apps to redesigned Control Centre, new features coming to iOS 18

- 04 JEE Advanced Result 2024: Students ‘caught in a surprise’ as cutoff raised by 23 marks

- 05 ‘Bhatakta atma’ will haunt Prime Minister Modi forever, says Sharad Pawar

- Elections 2024

- Political Pulse

- Entertainment

- Movie Review

- Newsletters

- Web Stories

- T20 World Cup

- Express Shorts

- Mini Crossword

- Premium Stories

- Health & Wellness

- Program Guide

- Sports News

- Streaming Services

- Newsletters

- OTTplay Awards

- OTT Replay 2023

- Changemakers

Home » Review » CBI 5 The Brain movie review: Mammootty’s cerebral thriller is pacy and overloaded with red herrings »

CBI 5 The Brain movie review: Mammootty’s cerebral thriller is pacy and overloaded with red herrings

What works best though in the film is how despite Iyer’s genius in nailing down the culprits, he repeatedly fails to make a solid case out of it. The script allows room to show the perpetrators’ brilliance too, making them worthy opponents for the sleuth

- Sanjith Sidhardhan

Last Updated: 12.04 PM, May 01, 2022

Story: After a death of a minister leads to suspected murders of an activist, a doctor and a police officer, the family of the cop approaches the court for CBI to take over the investigation. Enter Sethurama Iyer and his team, but the investigation, which is later referred to as one of the toughest the agency had to face, takes them on a ride where they hit more dead ends than uncover new clues. Along with the probe, threats to the chief minister, non-cooperation stance from the police and personal agendas of several people who wanted the CBI to take over, further threaten to derail the case. How Sethurama Iyer, along with the help of his trusted aides – old and new – finally zero in on the ones behind the series of murders and reveal their motives form the plot.

Review: An important scene in the second half of the investigative thriller CBI 5: The Brain has a character asking its protagonist Sethurama Iyer (Mammootty) to come up with something cogent and sensible. This could have been the directive of its filmmaker K Madhu and Mammootty to its scriptwriter SN Swamy if they had to collaborate for their fifth films in the 34-year-old franchise. And the veteran writer delivers!

The movie kicks off with an induction function in the CBI office where a senior officer, played by Renji Panicker, talks to the new batch of a case that had confounded even the best in the agency. The case – which involved series of murders of a minister, a cop, a cardiologist and a whistleblower activist – was initially probed by the police with DySP Sathyadas (Saikumar), but only to be transferred to CBI. This is where Iyer and his team enter the fray. What awaits them is a non-cooperative stance of the police, further threats to the CM (Dileesh Pothan) and even family secrets of those who initially lobbied to have the agency take up the case.

It's in the first half where Swamy packs the movie with a ‘basketful’ of red herrings and stories, in a bid to show how challenging it is for the CBI team to chase a worthy lead. However, what it also does is make it hard to keep track, if you lose attention for a moment. There’s so much happening even before the protagonist Iyer comes, 40 minutes into the movie that is 2 hours and 42 minutes long. It does make the movie fast-paced but at the same time, if you have zoned out, you are missing out on key details. To a point it doesn’t matter because most of it is deliberate distraction from the actual clues that lead to the perpetrators, but where is the fun in only chasing the right tail.

Apart from the several characters and storylines that Swamy and (in the movie) Iyer disregard later, the first half also plays the iconic theme song too often; this wears off the vibe after a point. The second half though gets better – just like every other CBI film bar, maybe the fourth. Here is also where the film finds its footing and you see Iyer’s brilliance in full swing – explaining his theories, breaking them down, making ‘connections’ and showing his frustrations of not finding enough evidence – albeit in the most sedate manner. What works best though in the film is how despite Iyer’s genius in nailing down the culprits, he repeatedly fails to make a solid case out of it. The script allows room to show the perpetrators’ brilliance too, making them worthy opponents for the sleuth.

Mammootty is a treat to watch as Sethurama Iyer. Despite having first played the character in 1988, he doesn’t seem to have missed a beat while reprising it for the fifth and definitely not the final time, from what the story hints. The actor beautifully lends the calm and aura to Iyer, and overshadows his opponents and colleagues without trying to do that. Saikumar’s Sathyadas is abrasive as intended, and he gets full points for mimicking the late actor Sukumaran, who played Sathyadas’ father Devadas in the first two films. Though there’s nostalgia sprinkled every 15 minutes in the film, the scene featuring Jagathy Sreekumar as Vikram is beautifully written and acted. It’s not a sequence that is used just for nostalgia’s sake as it reveals vital clues that take forward Iyer’s investigation.

The movie also has several characters that come and go including Dileesh Pothan as the chief minister, Asha Sharath as a lawyer, Anoop Menon as police chief, Sudev Nair as a corrupt cop and Soubin Shahir as a suspect. How Swamy and Madhu have used these characters well is also proof of how much work has gone into the film that has a healthy balance between nostalgia and today’s style of films. Akhil George’s cinematography and Jakes Bejoy’s revamped theme music have a huge role to play in that.

Verdict: Sethurama Iyer’s fifth outing is a well-written investigative thriller that has the sleuth facing off against opponents that are as smart as him. While the film is overloaded with red herrings, once it narrows its focus and finds its footing, it’s an engaging watch to see Iyer use his genius, with able and delightful support of his previous aides, to uncover the truth and make his case.v

WHERE TO WATCH

- New OTT Releases

- Web Stories

- Streaming services

- Latest News

- Movies Releases

- Cookie Policy

- Shows Releases

- Terms of Use

- Privacy Policy

- Subscriber Agreement

CBI 5 Movie Review: Mammootty is stellar in a classier instalment with an insipid third act

Rating: ( 2.5 / 5).

It's been 34 years since we met Sethurama Iyer in Oru CBI Diary Kurippu and 17 years since the release of Nerariyan CBI , the fourth entry in Malayalam's longest-running movie series. Naturally, as someone who, as a kid, looked up to Mammootty's distinguished and brainy CBI sleuth, one is eager to see the next instalment no matter how long it took and no matter how intolerable the last two films were. (I never felt the urge to revisit Nerariyan CBI and Sethurama Iyer CBI .) I hoped for the 5th entry, titled CBI 5: The Brain , to bring back the classiness of the first two films, Oru CBI Diary Kurippu (OCDK) and Jagratha . And it does, although only to a certain extent.

Director: K Madhu

Cast: Mammootty, Renji Panicker Mukesh, Sai Kumar, Ramesh Pisharody

Yes, CBI 5 is the most refined in the series after Jagratha . And I say 'refined' because CBI 5 forgoes the lame humour, teleserial-style performances and overuse of the background score that had a corrosive effect on the last two films. I'm not implying that CBI 5 is entirely devoid of teleserial-style performances, but it is relatively a vast improvement, meaning I found it more tolerable. Perhaps I sound a tad enthusiastic here because several instances in CBI 5 instantly transported me to that time when I discovered OCDK and Jagratha on Doordarshan for the first time.

Perhaps it's Jakes Bejoy's background score which retains Shyam's iconic score without modifying it too much. Or maybe it has to do with me imagining myself watching CBI 5 as a kid during the 90s because it felt like watching something made two decades ago. I was okay with it because it brought back some lovely moviegoing memories. I liked how Mammootty's intro scene calls back to the same in OCDK . I liked how cleverly they included, and did justice to, Jagathy's character Vikram.

And despite its obsolete quality, SN Swamy's writing managed to sustain my interest at least until the moments leading up to the third act. There were areas in the film that made me marvel at his ability to conjure up some very knotty situations. The film starts well enough, and for a while there, you sense a touch of novelty in presenting the entire film as a flashback from the point-of-view of one of Sethurama Iyer's sub-ordinates (Renji Panicker) when prepping young officers during an orientation class. When you see him recounting a case that "even Sethu sir found to be the most difficult of his career", you expect to be blown away by the events, twists and resolution. Unfortunately, that's not the case.

Despite Mammootty's effortless way of making it seem as though he played Sethurama Iyer only yesterday, the film begins to go downhill after some of the storytelling deficiencies that plagued the last two entries begin to rear their ugly heads after the first 100 mins or so. One of these is Sai Kumar's extremely annoying character Sathyadas who has become more insufferable since his last appearance. This dinosaur of a character should have been omitted or written as a more mature character.

There is a lot of confusion caused by the complex connections between various characters, but the film expects you to be. It follows a confusion-clarity-confusion-clarity pattern. At one point, Renji Panicker echoes our sentiments when he tells Mammootty that he, too, is confused. When everything becomes clear to him, we are in the same state. But when Sethurama Iyer hits an unexpected snag, the confusion begins again. However, when the killer's identity, motive, and methods unravel eventually, one can't help but feel a sense of deja vu .

The much-hyped 'basket killing' proves to be a damp squib. And the killing method has shown up at least in two Malayalam thrillers before this, including a most recent one.

And it also doesn't help when the actors playing the culprits deliver unintentionally hilarious performances. One of these (miscast) actors has messed up two other thrillers before this. When Renji Panicker piqued our curiosity by talking about the 'seriousness' of the case with a grave expression on his face at the beginning of the film, I expected the villains to be more menacing than the ones we get. While the events leading up to that point is interesting, the ridiculous finale doesn't do justice to an investigator of Sethurama Iyer's calibre.

Let's remember that CBI 5 comes at a time when films like Drishyam 2, Antakshari , and Jana Gana Mana impressed us with more innovative plot twists. I recently heard a joke about how the murders in the last CBI films were caused by someone not wanting his illicit affairs coming out in the open, which is true when you think about it. CBI 5 brings up one such liaison, but, fortunately, SN Swamy doesn't make that the focal point of the case.

And one of the other things I found off-putting is Sethurama Iyer crediting the 'almighty' for guiding him to clues. It reminded me of that time when my parents tried to convince me to eat more vegetables in my childhood by telling me, "Sethurama Iyer is so intelligent because he is a vegetarian." God and vegetables! Insert Robert Downey Jr's eye-roll meme here.

NewsApp (Free)

CBI 5: The Brain Review

Mammootty should sign up for an OTT series because he's too charismatic to let this be our last memory of the CBI diaries, suggests Divya Nair. SPOILERS AHEAD.

After Drishyam 2 and Marakkar , CBI 5: The Brain was one of the most anticipated Malayalam films to release in recent times.

I was deeply disappointed when I missed watching the fifth installment of the successful series in a theatre. My father had watched it and was thrilled.

In fact, each time he raved about it, I had a tough time telling him not to reveal the plot or twist.

When the film finally released on Netflix, I watched it first day first show in the comfort of my home.

Directed by K Madhu and written by S N Swamy, CBI 5: The Brain begins with CBI officer Balagopal (Renji Panicker) hosting a seminar for the new batch of IPS officers where he narrates an unusual but interesting murder mystery solved by the CBI team under the leadership of the brainy Sethurama Iyer (Mammootty).

The film begins with the state's home minister succumbing to a cardiac arrest on a flight from Delhi to Kochi.

Although no foul play is established, two other people, including the minister's doctor and a journalist who suspects a link, are also found dead in mysterious circumstances.

When former CBI officer Josemon is killed in an accident, the case is handed to the CBI after the police fail to nab the culprits.

When Mammootty marks his entrance on screen with the trademark CBI background score, I had goosebumps.

Enter Mukesh as Chacko and as part of the investigation they bump into their old nemesis Sathyadas (Saikumar) taking you on a short nostalgia trip of the '90s.

When they finally meet Vikram, (an ailing Jagathy Sreekumarshown as a retired CBI officer in a guest appearance) the CBI trio somehow feels complete.

By now, the makers have spent a good 30 minutes or more trying to establish the importance of its key characters and create some curiosity about the killings. But now what?

The chase leads to a set of useful and unimportant links which drag the film to another hour or so.

Frankly, I am tired, annoyed and want to fast forward to the mastermind, if there is one.

The mastermind is only revealed in the last 15 minutes.

But was it worth all the drama and chase?

C'mon, we are in 2022, where teenagers have killed themselves, hoping to win an online bounty. So discussing a 2012 case in which a man with many aliases hacked a pacemaker to kill someone seemed too vain for me.

The plot, I am sorry to say, failed to do justice for its characters.

Maybe people in the audience, like my father, got sentimental watching their favourite stars on screen.

He told me how people whistled and stood up watching Jagathy simply smile and nod. ' Verum dummy, but... ( simply, a dummy ...)' he'd told me and I believed him.

Like in the earlier series, the dummy my father mentioned (Jagathy) helps crack the case.

In hindsight, I feel, Mammootty should sign up for an OTT series because he's too charismatic to let this be our last memory of the CBI diaries.

As for me, I am just super glad I didn't spend big bucks to watch it in a theatre.

- YOUR OTT GUIDE

More like this

Salute review, puzhu review.

Bollywood News | Current Bollywood News | Indian News | India Cricket Score | Business News India

- Cannes 2024

- In-Depth Stories

- Web Stories

- Oscars 2024

- FC Wrap 2023

- Film Festivals

- FC Adda 2023

- Companion Zone

- Best Indian Films Forever List

- FC Front Row

- FC Disruptors

- Mental Health & Wellness

CBI 5 Movie Review: A Few Good Nostalgia-Powered Moments In An Otherwise Underwhelming Investigation

Cast: Mammootty, Jagathy Sreekumar, Mukesh, Sai Kumar, Soubin Shahir, Dileesh Pothan, Sudev Nair

Director: K. Madhu

Back in 2004, when the CBI Series' third instalment released after a gap of 15 years, the makers may have felt the need to reintroduce Mammootty's legendary character to a new audience. As they say, a new generation of film viewers are born every decade and you understand the logic of handholding them through the intellect of Sethurama Iyer. So we got a mini investigation that doubled up as the character's big hero introduction. In a mass movie, this would mean a capsule action block, usually dished out to an inconsequential villain right before the intro song. But because this series was always about The Brain , the intro needed to show you just how smart he was even when he's dealing with the most everyday crime. I don't exactly remember the entire sequence but there was this brilliance in the way Sethurama Iyer used a stick to solve the mystery of stolen money from a temple donation box. Within the first five minutes, you knew everything you needed to know even if this was your first CBI movie.

Fast forward to 2022 and there's a lot that has changed for the new Mammootty fan. Internet and memes have kept Sethurama Iyer relevant and there's no longer the need to tell you about the man and his big throbbing brain. What this has done is free writer SN Swamy to jump straight into a major case that is said to have challenged this mastermind. It's an interesting idea where all the hyping is being rationed out to one fascinating case rather than the superstar that solved it. It's also a cheeky way to remind the audience that the film they're about to watch requires complete attention, albeit without the hope of much service towards the fans.

Even so, it feels so energising to hear Jakes Bejoy's remastered version of Shyam's 'CBI Theme' playing over the opening credits. The character is the closest we will ever get to our Sherlock Holmes and you realise just how much nostalgia is riding on this franchise and the characters that inhabit them. Which is to say that it just hits different when you see Mukesh on screen as Chacko from this universe as opposed to his other characters. This is even more obvious in the way we feel a gush of emotions when the beloved Jagathy Sreekumar returns as Vikram to give us the film's best stretch. Even during his limited screentime, there's the feeling of home when he appears on screen as though he belongs there, always.

This speaks a lot about the investigation at its core when you come off remembering certain characters more than what they contribute to the screenplay. The main case file that gets reopened here is called the 'Basket Killings', a series of four to five murders that begins with the death of a minister aboard a flight from Delhi. And as with any previous CBI film, the first half here too is a dump of information to set up the crime scene(s), the people involved and a whole bunch of possible convicts.

Naturally, this also includes a fair share of red herrings with deceptively confusing close-ups that are designed to throw you off. Without lighter moments or relief, the writing here is clinical with one piece of information leading to the next. You understand the need for this approach because this case is being explained to a new crop of IPS trainees during their orientation. And because the case itself is being referred to in hindsight, there's really no room for anything else but the investigation itself.

But this also leads to a heady situation of the TMI. There's an information overload soon with too many things being revealed too quickly and all in the form of dialogues. Even the crimes are revealed plainly without leaving us with any lasting visuals and this runs the risk of draining any urgency to arrive at the criminals—one of the most important factors for us to stay invested.

What makes the film even harder to hold on to the flatness in the staging. With so many scenes set inside the CBI office with officers discussing the case with each other, it feels like a true-crime podcast would have sufficed to tell the same story.

Another factor that keeps the investigation at arms length is the way it never lets you feel like you have a chance at arriving at an answer. A great crime novel deceives you into thinking you're just one clue away from finding the murderer. But in CBI 5 the film keeps bringing up so much information that it feels like they're just shifting the goal post instead of building a solid defence. This is what I missed most because the earlier films always left you with the satisfying feeling of participating in the investigation yourself with your own theories and suggestions. But over here, the film keeps tying itself into so many knots that it doesn't matter who was behind the crimes.

Of course, it doesn't come as a surprise when you're not even close to guessing who did it because there's no way we could have known. Although there's a lot of posturing about how clever everyone is arrive at this, one doesn't really understand how talented the IPS students are if they've never heard of the final reveal until their orientation, despite how sensational it is made out to be.

It also doesn't help how the Internet has also made viewers more aware of the problematic images the film keeps flashing. Most of the members in Sethurama Iyer's core group of talented investigators are shown to be from dominant castes (the only Christian is shown to be a mole) and there's the repeated image of greyer characters eating meat as though their non vegetarianism contributes to their wickedness. And with so many characters getting introduced to get abandoned soon after, you never really understand where they stand in the larger scheme of things. It's as though they were there just to confuse and this could easily have been avoided when the case is being narrated to these trainees.

With these issues, CBI 5 is at best an underwhelming experience without the cleverness of its predecessors. In its effort to shock you with the final twist, it forgets that it should still matter to us when we get there.

Related Stories

- THE WEEK TV

- ENTERTAINMENT

- WEB STORIES

- JOBS & CAREER

- Home Home -->

- Review Review -->

- Movies Movies -->

‘CBI 5: The Brain’ review: A flawed, mildly engaging thriller

The movie also marks the much-awaited comeback of Jagathy Sreekumar

Mollywood's own Hercule Poirot is back. Four films and more than two decades later, Sethurama Iyer is as perceptive, measured and astute as ever, and the distinctive gait and mannerisms of the character are well in place when he arrives to solve another seemingly daunting sequence of crimes.

After the title credits that pay tribute to iconic moments in previous films in the series, the director-writer duo of K. Madhu and S.N. Swami introduces a case that baffled the CBI the most. When a minister dies under mysterious circumstances, and those connected to him, including a police officer who was probing one of the murders, get killed, the CBI steps in. The makers are aware that central agencies taking over a probe, even those that are barely controversial, are commonplace now, and so place the story safely in 2012.

Before the release of the movie, the term ‘basket killing’ has been all over social media, thanks to writer Swami. The writer had refused to divulge more about the term in connection with the movie, and consequently, there have been a few speculations about the plot. The term did pique the interest of aficionados of the genre. As Iyer and his team probes, to the tune of that iconic background score, the death of a cop, they meet with corrupt cops, misdirection and a mysterious assassin.

Over the course of the past two decades after the first movie in the series, Oru CBI Diary Kurippu (1988) , was released, investigation, as shown in cinema (and the scores of TV shows on several streaming platforms), has changed, for better. Sure, the brilliant investigator protagonist relies on his observational skills, insights and 'brain' to unravel the mystery, but technological developments which the audience is now familiar with have been equally resourceful to him/her. The director-writer duo has managed to keep pace with such advancements in setting up a plot device, and Iyer too, banks much on CCTV evidence and other advancements to zero in on his suspects. However, it is hard to overlook a few glaring plot holes, like a computer wizard carrying a highly advanced hacking software, in a pen drive, without encryption for the programme.

A frequent trope in the franchise has been sexual promiscuity and/or the attempts to conceal it and a resultant crime. The fifth instalment of the movie too stays faithful to this trope, and so at the centre of the proceedings is sexual jealousy, while infidelity forms a subplot.

There are a few twists and surprises to keep the movie mildly engaging, as bodies drop and new connections are unearthed. The director throws enough and more red herrings in your way, so every other character could be a suspect, or has an axe to grind. However, it is hard to overlook a few elementary things—that the method of murder shown in the movie might be inspired by an episode in the modern-day adaptation of Sherlock Holmes stories, Elementary .

When Iyer returns to screen after 17 years, his gait and mannerisms are intact, and this appears to be a cakewalk for Mammootty. However, the film-making style of a bygone era, including the close-up shots and dramatic dialogue delivery, too, is back and the new generation of moviegoers may find all these a tad unappealing.

The movie boasts a bevy of stars-- Soubin Shahir, Dileesh Pothan, Sudev Nair, Kaniha, Ansiba Hassan, Sai Kumar, Anoop Menon, Renji Panicker, Mukesh, Asha Sharath, and Ramesh Pisharody. However, there are hardly any characters that are well-developed or have an arc. Shahir fails to exude the charisma and menace that his character of a psychopathic computer wizard demands, and instead ends up being loud and tedious, without an ounce of nuance. The talented Sudev Nair is wasted in the role of a cop who barely has anything to contribute to the proceedings, while Sai Kumar as DySP Sathyadas is just a pissed off cop brought in for old time’s sake—there are a throwback sequences from earlier movies which have no bearing on the plot. Dileesh Pothan too has little to contribute.

The movie also marks the much-awaited comeback of Jagathy Sreekumar. His character, Vikram, is retired and wheelchair-bound, but, offers the deus ex machina in the few minutes that he is around. It is a delight to see the actor on screen after a long time, and the move might find favour with fans of the franchise.

If you are an Iyer fan and can overlook a few glaring inconsistencies and plot holes, the movie might engage you.

Movie: CBI 5: Brain

Directed by: K. Madhu

Starring: Mammootty, Soubin Shahir, Dileesh Pothan, Sudev Nair, Kaniha, Ansiba Hassan, Sai Kumar, Anoop Menon

Rating: 2/5

📣 The Week is now on Telegram. Click here to join our channel (@TheWeekmagazine) and stay updated with the latest headlines

Taken Away: An evocative memoir of a Tibetan monk set in tumultuous times

The friendly cousin

Poco F6: Solid performance for the price tag with reliable battery life

Editor's pick.

PM Modi's challenge in third term is to reorient his image to that of a consensus builder

How 4 Kashmiri fashion labels put the troubled valley on global style map

Stroke care: What India needs to do

Equity surge

- Let Me Explain

- Yen Endra Kelvi

- SUBSCRIBER ONLY

- Whats Your Ism?

- Pakka Politics

- NEWSLETTERS

CBI 5 review: Mammootty retains Sethurama Iyer’s traits, but can’t save film

Nostalgia for the beloved CBI movie series, if you have any, is likely to end with the opening credits of the fifth and latest in the series – CBI 5: The Brain . The joy of once again hearing the cherished theme music and snippets of famous lines from the earlier movies sprinkled across the titles puts you in a different mood, eager and expectant. But what follows is somewhat of a kick in the teeth. Even Mammootty, reprising the adored role of Sethurama Iyer, that invincible sleuth who never fails, can do little to save the film.

It is sad to write this about a series that began so very charmingly towards the end of the 80s, made by director K Madhu and scripted by SN Swamy. The duo produced three more in the intervening years before CBI 5 . While the first two remain the most popular in the series, the third and the fourth – coming in early 2000s – were welcomed spiritedly by admirers of the detective who walked to the catchy theme music with his hands held in a knot behind him, uttering Malayalam and English with a touch of Tamil, scratching his head to connect the dots and flashing a winning smile.

Mammootty retains all of that in CBI 5 , the traits that made Sethurama Iyer appealing to so many. But the script, by the same man who wrote the other movies in the series, lacks the quality of its predecessors. The crime revolves around a series of murders that begins with the death of a state minister on a plane, and for some reason called the Basket Killings. Renji Panicker, Ramesh Pisharody, Ansiba and Alexander Prasanth play other CBI officials who take part in the investigation with Sethurama Iyer.

Watch: Trailer of the film

Iyer’s usual sidekicks – Vikram and Chacko – have been reduced to smaller roles. Jagathy Sreekumar, the veteran actor who played Vikram, has been unwell following a serious accident in 2012. Mukesh, who played Chacko, has turned to politics. However, in credit to the script, Jagathy’s short scene is impactful and full of feeling. Sai Kumar, who plays a corrupt police officer in part 3, and was seen as the son of the antagonist cop (played by the late Sukumaran) in the earlier movies, also reprises his role. He still continues to imitate Sukumaran in his mannerism and speech, a needless affectation by an otherwise wonderful actor, which makes the character look a bit of a clown.

Read: 'Oru CBI Diary Kurippu': Why Mammootty's detective film is unsurpassed

However, it is the writing that is a bigger letdown. The investigation or the mystery barely manages to keep your interest, even when the script has stuck to the formula – jump from one suspect to another, find new witnesses, dig more, all to raise your curiosity. But your curiosity rises only to wonder when the ordeal will end.

Jarring music, dialogues rich with melodrama, long and tedious sequences that bring out the worst in good actors reduce the film to hardly an endurable watch. Perhaps with some very generous editing to chop off about three fourths of an hour and most of the background score – while retaining the theme music for a few occasions – the film might have worked a lot better.

It could also be that the team was trying to change with the changing times, bringing in technology to solve the crime, but it clearly didn’t help.

Disclaimer: This review was not paid for or commissioned by anyone associated with the series/film. TNM Editorial is independent of any business relationship the organisation may have with producers or any other members of its cast or crew.

- Documentary

- Trailer Breakdown

- DMT News APP

- Short Films

- Privacy Policy

‘CBI 5: The Brain’ Ending, Explained: Why Was I. G. Unnithan Caught? What Was Unnithan’s Motive?

Directed by K Madhu and written by S.N. Swamy, “CBI 5: The Brain” is a crime, mystery, and thriller movie that talks about a case that had the entire C.B.I. department puzzled. A movie about betrayals and murders, “CBI 5: The Brain” is an exciting Mallyali movie that snares the interest. Although the film is unnecessarily long, it does have its twists and turns and is a good watch. It was released on May 1st, 2022, in India and premiered on Netflix on June 13th, 2022.

‘CBI 5: The Brain’ Plotline

The story starts with a C.B.I. officer taking the class of an upcoming batch of I.P.S. officers. The officer from C.B.I. decides to teach them a lesson about the unexpected events they will face while working in the force and narrates a story of a case that had their minds boggled. He recounts the case with great detail and gives an account of the case of the basket killings. In this case, a lot of people were murdered, but the murders were ruled as deaths by natural causes. The students listened with rapt attention and also used it as a case study to get further into their futures.

Why Did D.Y.S.P. Sathyadas Help The CBI?

The case of the “Basket Killings” was a very baffling case for both the C.B.I. and the Kerala Police. It started with the death of Minister Samad, followed by the deaths of his doctor, Dr. Venu, a freelance journalist, Bhasuran, a police officer, Josemon, and a sand contractor, Sam. After the death of Minister Samad, autopsy reports had ruled it a natural death due to a heart attack. However, Bhasuran had raised suspicions about the death and suspected foul play. After the deaths of both the doctor and Bhasuran, the media started jumping on the case and had their doubts as well. The final straw was served with Josemon’s killing. The police of Kerala, led by D.Y.S.P. Sathyadas, had their hands full and decided to pursue a lead toward sand contractor, Sam. Sathyadas believed Sam was responsible for Josemon’s death. However, he made sure all the blame fell on Sam so that he could save his friend Subhash, another sand contractor.

Sathyadas had his fair share of dealing with bribes and was also a corrupted official. However, he detested the C.B.I. and abhorred how they took over all their interesting cases. He vanished the pieces of evidence and simply handed minute details of Josemon’s case to the C.B.I. when he transferred the case to them. Advocate Pratibha, Sathyadas’ wife, was the person who had shifted the case to the C.B.I. Sathyadas was first angry, and then adamant about not helping the C.B.I. and made away with the evidence.

The C.B.I. found out the real reason behind Pratibha’s decision. She was adamant about not letting them win the case and wanted to see them fail. Another reason behind this step was her affair with Sam. If her husband, Sathyadas, caught wind of the affair, there would be a huge argument at home. There was already a tiff between them due to her handing the case to the C.B.I.

After the C.B.I. made the discovery of her affair with Sam, they accused her of killing Josemon. However, Pratibha denied it with proof, because she was out of town when the incident happened. Although her affair had come to light, she still wanted the C.B.I. to lose. Sathyadas heard about the affair and realized that the C.B.I. had no new breakthroughs in the case. Therefore, he suggested they keep investigating the suspicious guesthouse that belonged to Sam, near Josemon’s murder location. He had a change of heart and provided Sethu with directions for the breakthrough.

I. G. Unnithan Caught, Why?

It started to take a turn for the worse for Unnithan after Sethu and his team caught Paul Meyjo, who had many aliases. Meyjo was connected to all of the murders. The pen drive around his neck served as ground-breaking evidence. The first time they caught Meyjo, they had to let him go due to his strong alibis. They followed the piece of paper that Meyjo had left behind and connected it with the two passengers who had missed the flight Minister Samad was on when he took his last breath. They realized that Susan George, one of the passengers, was the individual whom Meyjo was paid to kill.

Meyjo was a genius, although he used his genius for all the wrong reasons. He developed software that could hack into the pacemakers and stop the heart while making it seem like a natural death. He went to complete his mission and traced Susan. He had started to hack into her pacemaker, fitted by Dr. Venu, and was about to finish her. However, the C.B.I. arrived at the opportune moment, catching him in the act and making it impossible for him to escape. They finally heard the story behind the motive for killing Susan. Before this, the C.B.I. was utterly perplexed about the motive and the mastermind. However, after listening to Susan, they understood the motive and realized who the mastermind was but could not connect him to Paul Meyjo.

‘CBI 5: The Brain’ Ending Explained – The Motive Behind Unnithan’s Kills.

Susan’s ex-husband, I.G. Unnithan, had acquired a contract killer to kill her off after their divorce due to his paranoid tendencies and schizophrenia. He was very suspicious of her and in his twisted mind always doubted her loyalty. This made him torture and harass her due to his own paranoia, and after divorcing her, he wanted to kill her. He contacted Sam, his sand contractor, and met Paul Meyjo through him. He had denied knowing Paul Meyjo. However, the piece of paper he had torn and handed to Meyjo to make sure he didn’t miss his target again was his downfall. However, he had not mentioned anything about Susan to Paul due to which Minister Samad, who also had a pacemaker installed by Dr. Venu was killed. The others were simply dealt with because they came too close to the truth.

I.G. Unnithan had grown overconfident in how he had taken care of his trails. This overconfidence served as his downfall. He had helped the C.B.I. and was sure that the investigation would not lead back to him. He had forgotten to take care of the strong evidence that he thought was of no consequence. According to Freud, this behavior stems from the guilt of his criminal activities. He had the tendency to brag and was ecstatic that neither the police force nor the C.B.I. could trace the killings back to him. Some subconscious part of him wanted to get caught and had been working toward it. He had not planned for the murder he had committed; he simply wanted to kill his wife and was sure that it would not be traced back to him. However, the murders had started to take a toll on his conscience, and his subconscious screamed for help. The entire time he helped the C.B.I., unconsciously, he was pushing them toward his trail, begging them to help. Criminal psychology points to this behavior as a guilty conscience and unchecked mental issues that give rise to more illegal activities as a call for help. Instances of criminals bragging about their kills and robberies before they are apprehended are due to a pang of subconscious guilt. I.G. Unnithan, similarly, had forgotten to take care of the newspaper from which he had provided the torn page to Meyjo as a means to identify Susan.

This case served as an example to the new batch of I.P.S. officers to remain calm while solving cases that’d test their patience and resolve. They might come across unexpected betrayals that might crush their spirits, but they have to keep a calm mind and power through to finish their job and also help their conscience.

“CBI 5: The Brain” is a 2022 Indian Drama Thriller film directed by K Madhu.

- Crime Thriller

- Drama Thriller

- Streaming on Netflix

‘Interview With The Vampire’ Season 2 Episode 5 Recap & Ending Explained: Is Lestat Alive?

Real-life jacques in ‘becoming karl lagerfeld’ series: how did lagerfeld’s lover die, ‘the atypical family’ ending explained & finale recap: is gwi-ju dead or alive, ‘billy the kid’ recap of season 2 episode 6, more like this, ‘mayor of kingstown’ season 3 episode 2 recap & ending explained: is caspar dead, ‘becoming karl lagerfeld’ ending explained & finale recap: what pushes karl off the edge.

- Write For DMT

COPYRIGHT © DMT. All rights Reserved. All Images property of their respective owners.

- Visual Story

- Entertainment

- Life & Style

To enjoy additional benefits

CONNECT WITH US

‘CBI 5: The Brain’ movie review: Not a worthy sequel to a memorable series

Unfortunately, for the fifth film in the series, there is hardly anything else to add value apart from the nostalgia factor.

May 01, 2022 05:15 pm | Updated May 05, 2022 01:11 pm IST

A still from ‘CBI 5: The Brain.’

Writing a sequel for a popular film series with a history of more than three decades and many successes is no mean task. There is always the expectation to better the previous ones or at least match up to them. 17 years have passed since the last film in the CBI series was released. Since then, Malayalam cinema has changed unrecognisably. So have investigation thrillers. Yet, there is still a market for nostalgia, which is what the director-writer duo of K. Madhu and S.N. Swami aims to tap in CBI 5: The Brain .

But, nostalgia alone cannot do all the heavy lifting to make a movie appealing. Unfortunately, for the fifth film in the series, there is hardly anything else to add value. Even nostalgia gets milked to its limits, with that popular theme music that accompanies CBI officer Sethurama Iyer, getting repeated plays, even when he visits the Chief Minister.

The investigation this time revolves around a series of murders, which are termed 'Basket Killings', for reasons which are never explained in the movie. The victims include a minister, a police officer, a cardiologist and an activist, all of whom seem to be connected in some twisted ways. In between, one of the dead minister’s staff members go missing, the Chief Minister gets a threat mail and so much else happens, including several character introductions and possible explanations for the murders that it is hard to keep track.

CBI 5: The Brain

Director: k. madhu, cast: mammootty, jagathy sreekumar, saikumar, asha sharath.

The police investigation hits a roadblock as expected, and demands are raised for a CBI investigation. In comes Iyer (Mammootty) and his team. Yes, there is the obvious tracking of mobile records and flight data, but most of their findings are arrived at through endless back and forth conversations within the team, which get dreary after a point. One of the few bright spots is the sequence involving officer Vikram (Jagathy Sreekumar), which also yields a vital clue on the killer’s identity.

Even the staging of the killings, from a regular hit-and-run to a hanging, all of it in a lazy manner, gives one a hint of what lies ahead, not to mention the badly written opening scenes featuring an induction session for young police officers. Be it the script or the making style, everything harks back to the time when the last CBI film was made. It must have taken quite a lot of effort to ensure that the investigation part does not engage the audience at all, so much so that even a clue on the possible killer, which marks the interval point does not evoke much of a reaction in us.

But, much worse is in store, especially the part where Iyer unravels the mystery around the mastermind behind the murders. It is the kind of plot twist that has been so overused that people have ceased employing it nowadays. In the end, one wished for another investigation, to search for the 'brain' mentioned in the title, but which is missing in the film's script. Some sequels end up spoiling for us the memory of an entire series. CBI 5: The Brain is one of those.

CBI 5: The Brain is currently running in theatres

Related stories

Related topics.

Malayalam cinema / cinema / reviews

Top News Today

- Access 10 free stories every month

- Save stories to read later

- Access to comment on every story

- Sign-up/manage your newsletter subscriptions with a single click

- Get notified by email for early access to discounts & offers on our products

Terms & conditions | Institutional Subscriber

Comments have to be in English, and in full sentences. They cannot be abusive or personal. Please abide by our community guidelines for posting your comments.

We have migrated to a new commenting platform. If you are already a registered user of The Hindu and logged in, you may continue to engage with our articles. If you do not have an account please register and login to post comments. Users can access their older comments by logging into their accounts on Vuukle.

Movie Reviews

Tv/streaming, collections, great movies, chaz's journal, contributors.

Now streaming on:

If you're lucky enough to attend an early screening of John Krasinski's new film, "IF," you may be greeted with a short introduction by the writer/director, asserting that the film is expressly for all the "girl dads" out there. Having now seen it, that much is true: despite its family-friendly brief, "IF" is less for kids than for the adults of kids -- the girl dads, if you will -- who want something that feels a little more mature than " Minions " but doesn't scare the kids away. Far from it; it might just bore them to tears.

It's a bold shift for Krasinski, who's already transitioned from sitcom lead to successful director with the "Quiet Place" series, and yet, looking at the man himself, it makes perfect sense. This is the guy who started a little feel-good news show from his house during the pandemic (that he then sold to ViacomCBS for a presumed truckload of money), after all. He's the kind of all-American aw-shucks new dad who dipped his toe into the horror genre, and now wants to make a fun movie that his children can watch.

The results, such as they are, play out like a half-baked live-action adaptation of a Pixar picture, from the "Monsters, Inc"-like structure of the IF world and the dramedic coming-of-age tales of " Inside Out " and " Up ." The opening credits even evoke "Up," playing gauzy home movies of the rhythms of a playful, happy family—with Krasinski as the patriarch—ostensibly shot by a DV camera but which looks suspiciously like grainy, professional-grade film stock. When films use this kind of device, only one thing can come -- death. Not just once but twice: When we catch up with Krasinski's daughter, Bea ( Cailey Fleming ), she's still in mourning over the offscreen death of her mother some time ago, which is now compounded by her father staying at the hospital awaiting heart surgery. (We're never privy to the details: he just says he has a "broken heart," which is a nifty case study for the film's simple, cloying nature.) The trauma clearly eats away at her, despite Krasinski's quirked-up, obnoxious attempts to cheer her up in the hospital room.

In the meantime, Bea stays with her equally effervescent grandmother ( Fiona Shaw , one of the film's highlights) at her old, creaky apartment building. It's while there that she suddenly develops the ability to see people's imaginary friends (or IFs, as the film so proudly dubs them), and gets looped into an adventure involving her grandmother's downstairs neighbor, the cynical IF whisperer Calvin ( Ryan Reynolds ). You see, he's been running a kind of matchmaking service for IFs whose kids have stopped believing in them; once they do, you usually get put out to pasture in a kind of pastel retirement home. Bea, eager for something to do (and believe in), sets herself to the task of helping Calvin save the IFs by giving them someone to believe in them.

That's the loose framework upon which Krasinski's paper-thin script rests, one that gestures broadly at a kind of mechanical worldbuilding but soon throws its hands up in the air and greedily chases one heartstring after another. For a kid's adventure, it's surprisingly dour and sentimental, chucking laugh-out-loud jokes for a patient sense of melancholy. That may work well for the young dads in the audience, but it's gotta bore kids to tears.

Its early stretches see Krasinski using the suspenseful eye he developed during " A Quiet Place " to fascinating kid-horror effect: Janusz Kaminski shoots the winding staircase of grandma's apartment like it's the Overlook Hotel, and one early spooky moment shows us a kid's-eye view of how creepy a strange old woman leering at you in the hallway can be. There's something of Guillermo del Toro's more sentimental work in some of these moments, building a world where imagination can be just as much a threat as comfort.

But then we get to the IFs and their dilemma, where most of "IF" loses its steam. The creatures themselves are hardly much to write home about: they take whatever form their kids conceived, from fire-breathing dragons to walking, talking, self-roasting marshmallows, all voiced by a murderer's row of "that guy" guest voices that'll leave you reaching for your phone to pull up IMDb right after.

Sure, they're technically impressive to look at, but they're bereft of character or whimsy. That's especially true for the film's central IF, Blue ( Steve Carell ), a purple, snaggle-toothed furball resembling the Grimace as subjected to years of British dentistry. Rather than play him with any kind of arched eyebrow, Carell gives a surprisingly workmanlike performance, a right shame given the verbal dexterity that lets him own wild animated characters like Gru.

The human cast fares little better, especially Reynolds, who coasts through this thing with the half-hearted zeal of someone sick of repeating the same Deadpool schtick. It almost feels redundant to cast him here since he functions as a kind of stand-in for Krasinski as the "fun dad" he's always wanted to be; instead, Calvin exists primarily as a smarmy sidekick, a fellow cynic who nonetheless helps the IFs on their mission. Then there's Fleming herself, a waifish young girl who rises to the occasion in a few Big Moments near the end but who largely gets little to do besides pout and absorb information.

The mechanics of the IFs also beggar belief and change on a dime depending on which lazy heartstring Krasinski wants to pull next. The script can't seem to decide how they really work: Do they disappear once forgotten about, or are they put in a home? Is the plan to rehome them to new kids, or get their now-grown adult companions to believe in them again? What's the plan from there? All immaterial questions for the presumed kiddie audience, but it's easy to get lost in the shoddy mechanics of the thing when the product as is is this listless and humorless. By the end, you get the distinct feeling that all of this sturm und drang is in service to stakes that, all told, are exceedingly minimal.

Occasionally, Krasinski lands on a neat idea or a perfect scene: A kaleidoscopic chase through an IF retirement home that Bea is changing with her imagination (complete with Busby Berkeley riffs and Reynolds climbing through an oil painting); Shaw's character remembering her love of ballet while her former IF ( Phoebe Waller-Bridge ) dances alongside just out of sight. But for every one of these, we get another tired scene with half-hearted performers rotely asserting the plot, or trotting out cloying platitudes like "The most important stories we tell are the ones we tell ourselves." That's to say nothing of the film's musical choices, the last of which is so on-the-nose, so egregious, that Wes Anderson should sue for plagiarism.

"IF" is a well-intentioned misfire—a kid's movie without laughs and a parent's movie without purpose. I sure hope Krasinski had a ball making it; it seemed like a welcome balm after the stressors of doing two horror pictures. But now, it's time to put away childish things.

Clint Worthington

Clint Worthington is a Chicago-based film/TV critic and podcaster. He is the founder and editor-in-chief of The Spool , as well as a Senior Staff Writer for Consequence . He is also a member of the Chicago Film Critics Association and Critics Choice Association. You can also find his byline at RogerEbert.com, Vulture, The Companion, FOX Digital, and elsewhere.

Now playing

Robot Dreams

Brian tallerico.

Kaiya Shunyata

Evil Does Not Exist

Glenn kenny.

The Contestant

Monica castillo.

Matt Zoller Seitz

Back to Black