How to Change the PowerPoint Default Presentation Screen

Is there a particular view in PowerPoint that you like to use, and are you tired of switching to it every time you open a presentation or create a new one? If so, knowing how to change the PowerPoint default presentation screen could improve the way you use Microsoft’s presentation software.

Fortunately, PowerPoint for Office 365 has a setting that lets you specify which view should be used whenever you open a presentation in the program.

Our article below will show you where to find this setting so that you can select your preferred view from a handful of options, including several Normal view configurations, Outline Only, and Slide Sorter. It is a handy setting for people who don’t like the default view and want to use one of the other views available in PowerPoint.

How to Change the Default Display Setting in PowerPoint for Office 365

- Launch PowerPoint.

- Click File.

- Select Options.

- Choose Advanced.

- Click Open all documents using this view, then select one.

Our guide continues below with additional information on changing the display settings for your slide show in the PowerPoint window, including pictures of these steps.

How to Set a Default View in PowerPoint for Office 365 (Guide with Pictures)

The steps in this article were performed in Microsoft PowerPoint for Office 365. Adjusting this setting changes a default within the application. Once you make this change, every slideshow you open or create, whether existing or new, will use the view that you have specified.

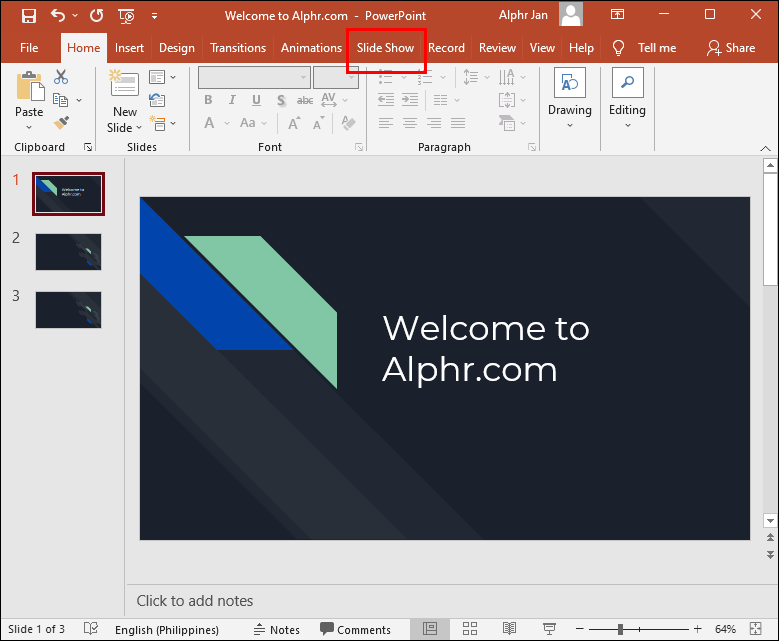

Step 1: Open PowerPoint.

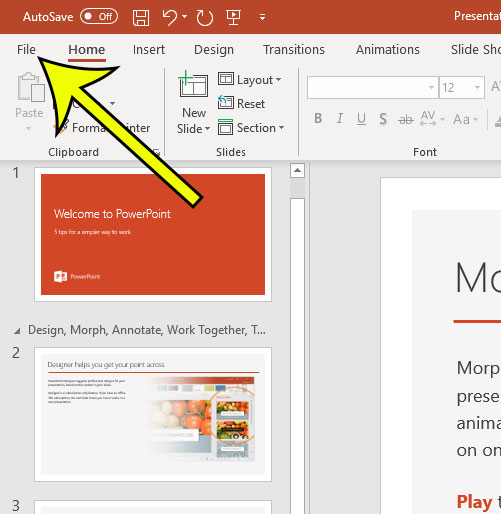

Step 2: Choose the File tab at the top-left of the window.

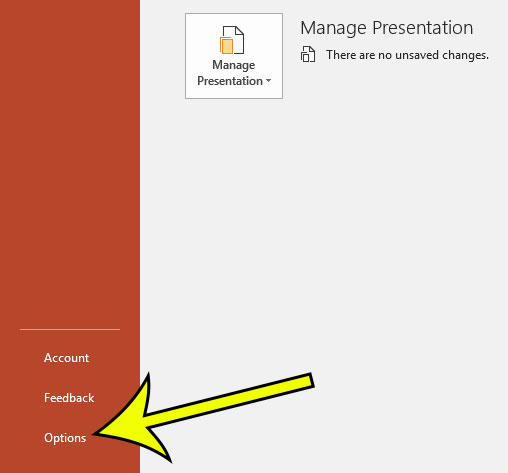

Step 3: Click the Options button at the bottom-left of the window.

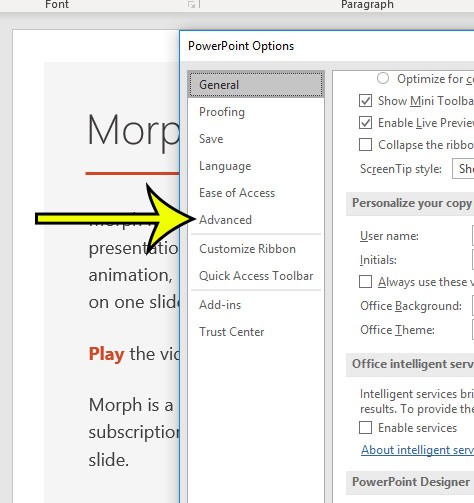

Step 4: Select the Advanced tab.

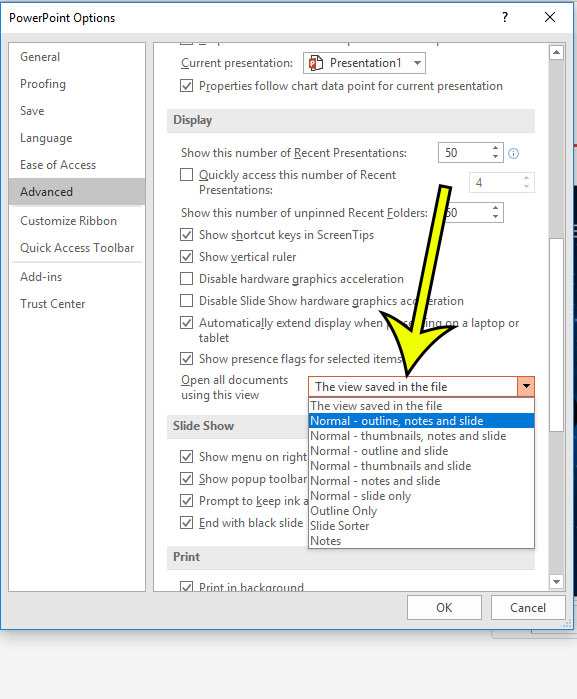

Step 5: Scroll down and click the dropdown menu to the right of Open all documents using this view, select the desired view, then click the OK button.

Our tutorial continues below with additional discussion about changing the default presentation screen in Microsoft PowerPoint.

More Information on Changing the PowerPoint Default View and Monitor

The options available for the default view selection are:

- The view saved in the file

- Normal – outline, notes and slide

- Normal – thumbnails, notes and slide

- Normal – outline and slide

- Normal – thumbnails and slide

- Normal – notes and slide

- Normal – slide only

- Outline Only

- Slide Sorter

Now if you close the current presentation and open another one, it will open with the view that you specified.

If you change this setting a few times and ultimately decide that you would rather go back to the original setting, simply change the selection in step 5 above to the "The view saved in the file" option.

If you want to change the PowerPoint default presentation screen because your computer has multiple monitors, that requires a different set of steps, which we discuss further below.

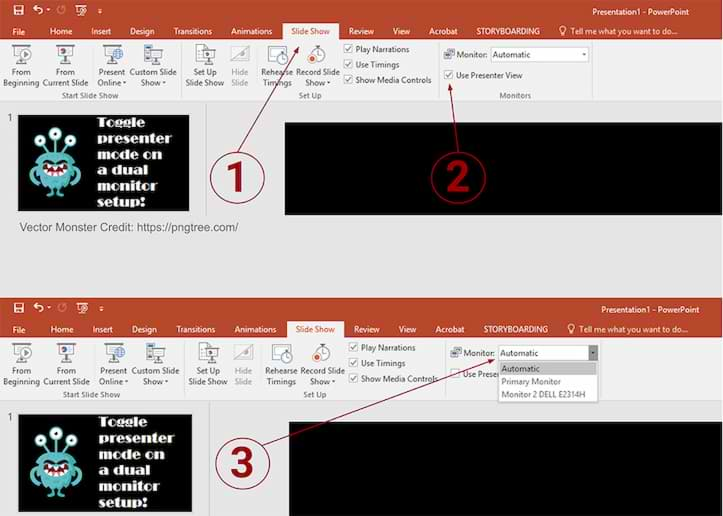

Note that the Slide Show tab has a number of other options concerning the display of your slideshow.

- If you have multiple monitors connected to your computer, for example one screen for one application and a second screen for something else, then you might want to specify which one shows the slideshow. Click the dropdown menu to the right of Monitor and choose either Automatic or one of the listed monitors.

- Under the monitor selection is a check box for Use Presenter View. If you enable that setting, your presentation opens in a full-screen mode where you see the current slide, any speaker notes that you added, and a small preview of the next slide. If you turn off Presenter View, you will see your presentation the same way that your audience sees it.

When you click the Design tab at the top of the window you can see a list of the available themes. If you click one of those themes, it is applied to the current presentation.

However, right-clicking one of those themes shows some additional options, including Set as Default Theme. Choosing that option makes the selected theme the default for new presentations that you create.

Would you like to disable all of the animations in a slideshow that you are working on? Find out where to make this change so that you can present and edit without any of the animations that exist in the presentation.

Kermit Matthews is a freelance writer based in Philadelphia, Pennsylvania with more than a decade of experience writing technology guides. He has a Bachelor’s and Master’s degree in Computer Science and has spent much of his professional career in IT management.

He specializes in writing content about iPhones, Android devices, Microsoft Office, and many other popular applications and devices.



How To Turn Off Presenter View in PowerPoint

Lee Stanton is a versatile writer with a concentration on the software landscape, covering both mobile and desktop applications as well as online technologies. February 3, 2022

Presenter view is a great tool to use when making presentations. It allows you to present slides professionally to the audience while keeping your talking points to yourself. However, there may be instances when you would prefer not to use the Presenter View feature. Maybe you are presenting on Zoom and need to share your screen with your audience. Perhaps you just find it simpler to teach your class without it.

Whatever your situation might be, this step-by-step guide will walk you through how to turn off Presenter View.

This article will look at how to turn off Presenter View in PowerPoint from various devices and platforms, including Teams and Zoom.

Turn Off Presenter View in PowerPoint for Windows

When working in PowerPoint on two different monitors (yours and the one for the audience), you will, in most instances, want to disable Presenter View from the audience screen. This will prevent them from seeing your talking points.

To do this, follow the steps outlined below:

Presenter View will now only be visible on your screen.

You can also turn off Presenter View for both screens by following the steps below:

Presenter View has now been disabled on both monitors.

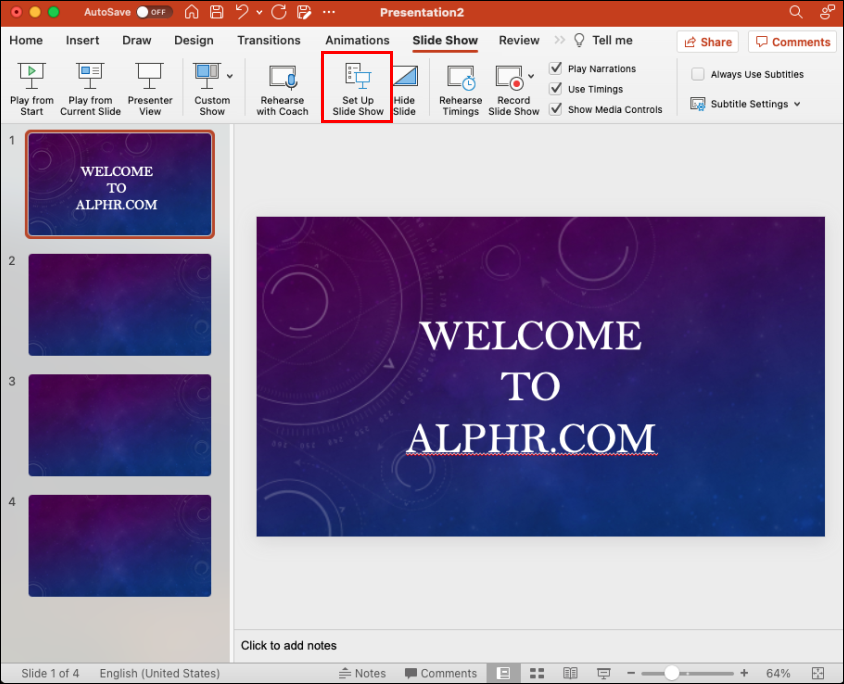

Turn Off Presenter View in PowerPoint for Mac

Things work a little differently if you use a Mac, but don’t worry. We will guide you through turning off Presenter View in PowerPoint on your Mac.

- This will disable Presenter View and revert you to the mirrored slide display.

Turn Off Presenter View in PowerPoint on Zoom

Presenter View usually works best when using two different monitors: one for the presenter and another for the audience. That way, the talking points can only be viewed by one party. With more and more meetings taking place on Zoom, the dual-monitor approach can get tricky because the presenter shares their screen with the group. Let’s find out how to turn off Presenter View in Zoom.

Presenter View has now been turned off, and you can stop sharing your presentation and exit the slideshow. The screen sharing will stop, and Zoom will pop back up.

It’s important to remember to stop sharing your presentation before exiting PowerPoint. If you don’t, whatever was displayed on the presenter’s screen will be shown to the Zoom participants.

Turn Off Presenter View in PowerPoint on Teams

Microsoft updated Teams and made Presenter View the default mode when sharing presentations. The feature is quite useful as it allows participants to move back and forth within slides without disrupting the presenter. Microsoft, however, did not provide a way to turn off Presenter View on this platform. If you are looking to disable the feature, there is a keyboard workaround that you can use for that purpose.

To turn off Presenter View for PowerPoint in Teams:

Turn Off Presenter View in Google Meet

If you are holding your presentation on Google Meet, you have the option to share your entire screen, a window, or a tab. For Presenter View, you can opt to share one window with the audience while keeping a second window with your notes private.

To turn off Presenter View, all you need to do is close the window or tab that contains your speaker notes. Do this by navigating to the bottom right corner of the page and clicking “You are presenting,” then “Stop Presenting.” You will now have turned off Presenter View in Google Meet.

Turn Off Full Screen Presenter View in PowerPoint

Perhaps instead of turning off Presenter View, you would prefer to exit full-screen mode instead. This would allow you to have your speaker notes handy while still having access to your toolbar and other applications.

To do this, you would need to display Presenter View in a window instead of on the full screen. Here’s how to go about doing that:

Now PowerPoint will open in a window instead of full screen, and you will be better able to manage your Presenter View mode.

Additional FAQs

What do you do if Presenter View is showing up on the wrong monitor?

Sometimes things get mixed up, and your presentation notes appear on the audience's screen. You can quickly fix this as follows:

1. Click “Display Settings” on your PowerPoint screen.

2. At the top of the “Presenter Tools” page, select “Swap Presenter View and Slide Show.”

Turn Off Presenter View in PowerPoint

PowerPoint’s Presenter View is an amazing feature that allows you to present without losing the option to refer to your notes. However, there may be instances where you would rather have the feature off. As we have seen, disabling Presenter View can be an easy process to navigate once you know where to look.

How often do you use Presenter View when delivering virtual presentations? Let us know in the comments section below.


Set the default monitor for the Presenter View window?

Is there a way to choose or control on which screen the Presenter View window appears? It's easy to assign a specific monitor to the actual presentation output, but I also want the Presenter View (with the slide preview and notes) to appear on a specific screen.

I have 3 screens, screen 2 is the primary screen for the Windows desktop and screen 3 is assigned to the presentation output. For some reason, the Presenter View window appears always on screen 1, but I want it to appear on screen 2 (the primary display). It's not a big issue because I can just drag the Presenter View window over (after exiting the full-screen mode), but it would be much easier if it appeared on screen 2 right away. I hope it's not hard-coded to the screen number because I can't change the physical screen assignment (screen 1 is a built-in laptop display).

PowerPoint: Presenter View on Dual Monitors

To disable the presenter view:

- Within PowerPoint, click the [Slide Show] tab.

- Locate the "Monitors" group > Uncheck "Use Presenter View."

- Within the "Monitors" group, click the "Monitor" dropdown menu > Select the specific monitor on which the slideshow should display. (The default option reads "Automatic.")
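If the checkbox needs to be cleared from a script rather than by hand, PowerPoint's object model exposes the same setting as `SlideShowSettings.ShowPresenterView`. The sketch below is only an illustration under stated assumptions: the helper name `disable_presenter_view` is hypothetical, and it expects a presentation object obtained through COM automation (for example with the third-party pywin32 package on Windows). A stand-in object is used so the sketch runs without PowerPoint installed. It does not cover the monitor selection.

```python
from types import SimpleNamespace

MSO_FALSE = 0  # MsoTriState.msoFalse in the Office object model


def disable_presenter_view(presentation):
    """Clear the "Use Presenter View" setting for one presentation.

    `presentation` is assumed to expose PowerPoint's
    SlideShowSettings.ShowPresenterView property, such as a COM
    Presentation object obtained via pywin32 (an assumption).
    """
    presentation.SlideShowSettings.ShowPresenterView = MSO_FALSE
    return presentation


# Stand-in object (hypothetical) so the sketch runs without PowerPoint.
demo = SimpleNamespace(SlideShowSettings=SimpleNamespace(ShowPresenterView=-1))
disable_presenter_view(demo)
```

On a Windows machine with pywin32, the presentation object would typically come from something like `win32com.client.Dispatch("PowerPoint.Application").ActivePresentation`; that usage is an assumption and has not been tested here.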


PowerPoint presentations in a window not full screen

PowerPoint presentations don’t have to be full-screen. That is the default and normal way to show a deck, but a window option is also available. A windowed presentation lets you display the slides in other software like virtual cameras or desktop capture tools.

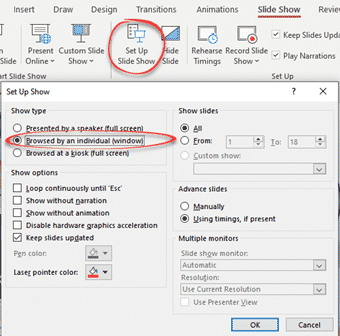

Go to Slide Show | Set Up Slide Show and choose ‘Browsed by an individual (window)’.

The options are the same in PowerPoint for Windows or Mac.

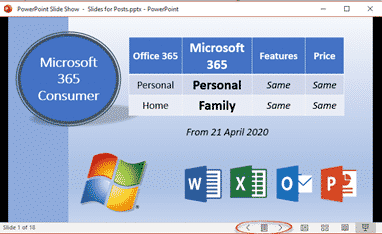

Start the slide show (Slide Show | From Beginning or From Current Slide) as usual except now it appears in a resizable window.
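The ‘Browsed by an individual (window)’ choice corresponds to the `ppShowTypeWindow` value of `SlideShowSettings.ShowType` in PowerPoint's object model. The sketch below shows how a script might set it, as an illustration only: the helper name `use_windowed_show` is hypothetical, and the presentation object is assumed to come from COM automation (for example the third-party pywin32 package on Windows). A stand-in object keeps the sketch runnable without PowerPoint.

```python
from types import SimpleNamespace

PP_SHOW_TYPE_WINDOW = 2  # PpSlideShowType.ppShowTypeWindow


def use_windowed_show(presentation):
    """Set the slide show to run in a resizable window.

    `presentation` is assumed to expose PowerPoint's
    SlideShowSettings.ShowType property (an assumption about
    how the caller obtained the object).
    """
    presentation.SlideShowSettings.ShowType = PP_SHOW_TYPE_WINDOW
    return presentation


# Stand-in object (hypothetical); 1 is ppShowTypeSpeaker, the full-screen default.
demo = SimpleNamespace(SlideShowSettings=SimpleNamespace(ShowType=1))
use_windowed_show(demo)
```

With a real presentation object, calling `SlideShowSettings.Run()` afterwards would start the show with this setting.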

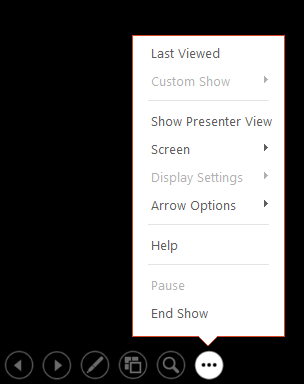

Windowed presentation controls

There are back and forward slide buttons on the bottom status bar (see above).

Click on the icon between those two buttons to see some more options.

The same options appear if you right-click in the presentation while windowed.

- Next / Previous

- Go to Slide – choose a slide from the flyout list.

- Go to Section – for decks in Sections

- Zoom In / Zoom Out

- Print Preview and Print

- Edit Slides

- Full Screen

It would be really nice if Presenter View could appear in a second window, but alas it’s not an option. That would let the user control the presentation properly while the slides appear in another window (which could be visible via a third-party tool).

Switching Full Screen and window slide show

Starting from a windowed presentation means you can switch between Full Screen and the window presentation without showing the entire PowerPoint menus etc. It’s a little neater and more professional.

Go to Full Screen from the menu option.

In Full Screen mode press Escape to return to the windowed presentation.

Why use a windowed PowerPoint presentation?

Having the slides in a resizable window gives you options not available when the deck is taking up the whole screen.

Perhaps you’re demonstrating some software? Have the presentation in one window and the software in another. See A better Side-by-Side document view for Windows and Mac to see how to use window controls in Windows or Split View on a Mac.

A windowed presentation can be selected as an input option for a virtual camera or other service which lets you choose to display a selected running program. Full screen PowerPoint can’t be selected but the same slide can be chosen from a windowed presentation.


About this author

Office-Watch.com



Automatically open Powerpoint in presenter mode, on correct screens

We have a laptop that we only use to present an induction course which is just a powerpoint presentation.

We have a second screen hooked up, and when the presentation opens someone has to navigate to and hit the start presentation button. At that point it opens in presenter view with the notes on the laptop and the presentation on the second screen.

What I'd like to be able to do is avoid that one element of interaction.

Is it possible to launch a PowerPoint presentation directly into presentation mode with no additional user interaction?

3 Answers

Save the file in PowerPoint Show (*.ppsx) format. It will open automatically in presentation mode.

From Microsoft's site:

PowerPoint Show (.ppsx): A presentation that always opens in Slide Show view rather than in Normal view. Tip: To open this file format in Normal view so that you can edit the presentation, open PowerPoint. On the File menu, click Open, and then choose the file.

Note: If you need macros enabled, save as a .ppsm. If you're in PowerPoint 2003, the older format you need is .pps.
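A related route that avoids re-saving the file is PowerPoint's documented `/S` command-line switch, which opens a given presentation as a slide show. The sketch below is not part of the answer above; it only illustrates how a launcher script might build that command. The function names and the example paths are hypothetical, and whether Presenter View appears when a show is started this way is not verified here.

```python
import subprocess


def slideshow_command(powerpoint_exe, presentation_path):
    """Build the command that opens a file directly as a slide show.

    Uses PowerPoint's /S switch; both arguments are supplied by the
    caller (the paths in the usage note are hypothetical).
    """
    return [powerpoint_exe, "/S", presentation_path]


def launch_slideshow(powerpoint_exe, presentation_path):
    """Start PowerPoint with the /S switch without waiting for it to exit."""
    return subprocess.Popen(slideshow_command(powerpoint_exe, presentation_path))
```

For example, `launch_slideshow(r"C:\Path\To\POWERPNT.EXE", r"C:\decks\induction.pptx")` could be placed in a startup script, with both paths adjusted to the actual installation and file.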

- it opens the presentation on screen 2 correctly but you don't get the presenters view on screen 1. Can't see an option for configuring it... – Patrick Commented Jul 7, 2017 at 14:59

- Hmmm. Seems you're right, there's no way to force visibility of the Presenter View in .ppsx files. That's annoying, and weird. So, my next method would be to save as a .pptm (macro-enabled .pptx) and use VBA to launch the slideshow, which would bring up Presenter View. But irritatingly, PowerPoint doesn't allow you to execute macros on open - so we need another workaround. You could download an auto_open PowerPoint add-in to give this functionality, or you could use something like a macro-enabled Excel file to call the .pptx on open. Bit painful but it can be done. – Andi Mohr Commented Jul 7, 2017 at 15:51

- Frustrating, so close and yet so far. I think I'll just leave an instruction on screen 'open powerpoint, click these two buttons' and be done with it. There is only so much you can automate away :) Thanks for you help. – Patrick Commented Jul 10, 2017 at 8:38

I achieved this by the following

I added a macro to the PowerPoint presentation

Then start the powerpoint presentation from the command line with

I used a 2003 presentation in 2016 - so the extension for presentations containing macros is ppt not pptm.

The remaining issue I have is that when I close the presentation it prompts to save - it does not do this if I load and run it using the GUI.
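The macro and the exact command used in this answer are not preserved in this copy, so the following is not the answerer's command. It is a minimal sketch of one way such a launch could look, assuming PowerPoint's documented `/M` switch, which runs a named macro in a specified presentation. The function name, the macro name `StartShow`, and the file names are all hypothetical.

```python
import subprocess


def run_macro_command(powerpoint_exe, presentation_path, macro_name):
    """Build a command that opens a presentation and runs one macro.

    Relies on PowerPoint's /M switch; the macro itself must already
    exist in the presentation (its name here is an assumption).
    """
    return [powerpoint_exe, "/M", presentation_path, macro_name]


def launch_with_macro(powerpoint_exe, presentation_path, macro_name):
    """Start PowerPoint with the /M switch without waiting for it to exit."""
    return subprocess.Popen(
        run_macro_command(powerpoint_exe, presentation_path, macro_name)
    )
```

A call such as `launch_with_macro("POWERPNT.EXE", "induction.ppt", "StartShow")` would then depend on the macro starting the slide show, and on macro security settings allowing it to run.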

I think I'm a bit late, but this might be helpful to others.

The best way I could find to start in presenter mode is by pressing Alt F5. It will start from the first slide, though. If you want to start from the current slide, you might need to use the sequence Alt S C. But it doesn't work if you press each individually; they have to be pressed at the same time. Also, this second method makes a weird error sound and I couldn't figure out why.

Anyway, if you are ok about starting on the first slide, Alt S will do just fine.

Source: https://support.office.com/en-ie/article/use-keyboard-shortcuts-to-deliver-powerpoint-presentations-1524ffce-bd2a-45f4-9a7f-f18b992b93a0

- (1) The question says “with no additional user interaction”. It sounds like you’re just offering a different form of user interaction. (2) Or rather, three different forms. What’s the relationship between Alt+F5 and Alt+S? – Scott - Слава Україні Commented Jun 27, 2019 at 23:38


Support from FASE's Education Technology Office

- How can I view a PowerPoint show without using full screen?

Updated on Apr 27, 2020

This guide provides instructions on how to set your PowerPoint (for Windows) application to play your slide show in a window, not in full screen. This is particularly useful if you are participating in a video call and might want to see the presentation, your notes, and the webinar interface.

Please note: To display your presenter notes, you will need a two-monitor setup.

How to display a slide show in a window:

- Select "Set up Slide Show" on the "Slide Show" tab

- Select the radio option, "Browsed by an individual (window)"

- Start your PowerPoint show

- To exit the show, use the "Normal" button to return to the file editing interface.

1. Select "Set up Slide Show" on the "Slide Show" tab.

- Navigate to the "Slide Show" tab.

- Select "Set up Slide Show."

2. Select the radio option, "Browsed by an individual (window)"

- Select the "Browsed by an individual (window)" radio button option.

- Select "OK" to save your changes.

3. Start your PowerPoint show (as per normal).

4. To exit the show, use the "Normal" button to return to the file editing interface.


Choose the right view for the task in PowerPoint

You can view your PowerPoint file in a variety of ways, depending on the task at hand. Some views are helpful when you're creating your presentation, and some are most helpful for delivering your presentation.

You can find the different PowerPoint view options on the View tab, as shown below.

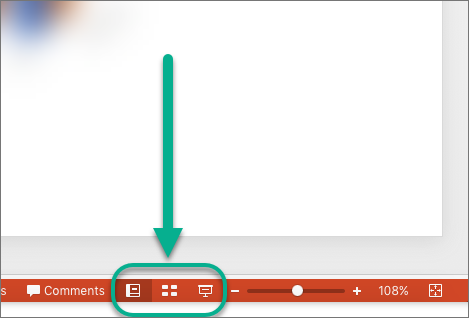

You can also find the most frequently used views on the task bar at the bottom right of the slide window, as shown below.

Note: To change the default view in PowerPoint, see Change the default view.

Views for creating your presentation

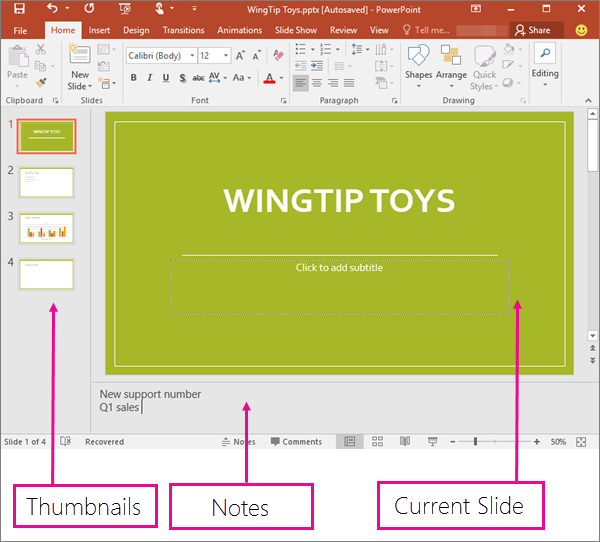

Normal view

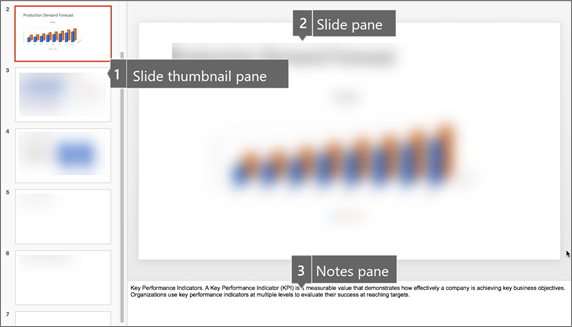

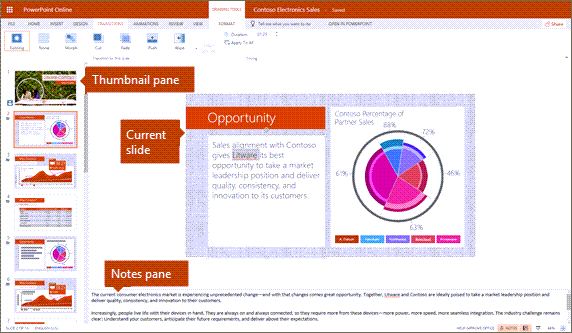

Normal view is the editing mode where you’ll work most frequently to create your slides. Below, Normal view displays slide thumbnails on the left, a large window showing the current slide, and a section below the current slide where you can type your speaker notes for that slide.

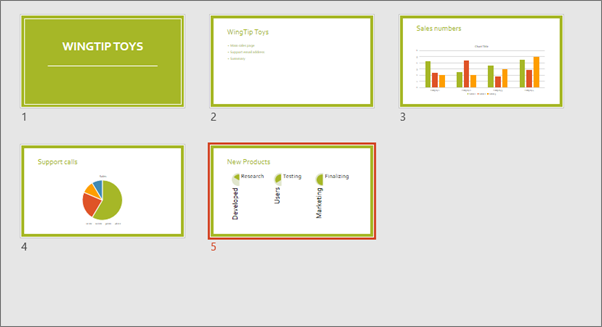

Slide Sorter view

Slide Sorter view (below) displays all the slides in your presentation as horizontally sequenced thumbnails. Slide Sorter view is helpful if you need to reorganize your slides: you can just click and drag your slides to a new location or add sections to organize your slides into meaningful groups.

For more information about sections, see Organize your PowerPoint slides into sections.

Notes Page view

The Notes pane is located beneath the slide window. You can print your notes or include the notes in a presentation that you send to the audience, or just use them as cues for yourself while you're presenting.

For more information about notes, see Add speaker notes to your slides.

Outline view

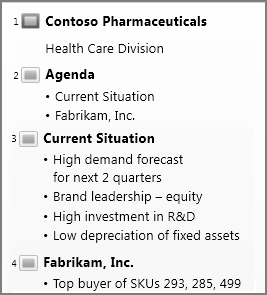

You can get to Outline view from the View tab on the ribbon. (In PowerPoint 2013 and later, you can no longer get to Outline view from Normal view. You have to get to it from the View tab.)

Use Outline view to create an outline or story board for your presentation. It displays only the text on your slides, not pictures or other graphical items.

Master views

To get to a master view, on the View tab, in the Master Views group, choose the master view that you want.

Master views include Slide, Handout, and Notes. The key benefit to working in a master view is that you can make universal style changes to every slide, notes page, or handout associated with your presentation.

For more information about working with masters, see:

What is a slide master?

Use multiple slide masters in one presentation

Change, delete, or hide headers and footers on slides, notes, and handouts

Views for delivering and viewing a presentation

Slide Show view

Use Slide Show view to deliver your presentation to your audience. Slide Show view occupies the full computer screen, exactly the way your presentation will look on a big screen when your audience sees it.

Presenter view

Use Presenter view to view your notes while delivering your presentation. In Presenter view, your audience cannot see your notes.

For more information about using Presenter view, see View your speaker notes as you deliver your slide show.

Reading view

Most people reviewing a PowerPoint presentation without a presenter will want to use Reading view. It displays the presentation in a full screen like Slide Show view, and it includes a few simple controls to make it easy to flip through the slides.

The views in PowerPoint that you can use to edit, print, and deliver your presentation are as follows:

Master views: Slide, Handout, and Notes

You can switch between PowerPoint views in two places:

Use the View menu to switch between any of the views

Access the three main views (Normal, Slide Sorter, or Slide Show) on the bottom bar of the PowerPoint window

Views for creating or editing your presentation

Several views in PowerPoint can help you create a professional presentation.

Normal view

Normal view is the main editing view, where you write and design your presentations. Normal view has three working areas:

Thumbnail pane

Slides pane

Slide Sorter view

Slide Sorter view gives you a view of your slides in thumbnail form. This view makes it easy for you to sort and organize the sequence of your slides as you create your presentation, and then also as you prepare your presentation for printing. You can add sections in Slide Sorter view as well, and sort slides into different categories or sections.

Notes Page view

The Notes pane is located under the Slide pane. You can type notes that apply to the current slide. Later, you can print your notes and refer to them when you give your presentation. You can also print notes to give to your audience or include the notes in a presentation that you send to the audience or post on a Web page.

Outline view (Introduced in PowerPoint 2016 for Mac)

Outline view displays your presentation as an outline made up of the titles and main text from each slide. Each title appears on the left side of the pane that contains the Outline view, along with a slide icon and slide number. Working in Outline view is particularly handy if you want to make global edits, get an overview of your presentation, change the sequence of bullets or slides, or apply formatting changes.

Master views

The master views include Slide, Handout, and Notes view. They are the main slides that store information about the presentation, including background, theme colors, theme fonts, theme effects, placeholder sizes, and positions. The key benefit to working in a master view is that on the slide master, notes master, or handout master, you can make universal style changes to every slide, notes page, or handout associated with your presentation. For more information about working with masters, see Modify a slide master.

Views for delivering your presentation

Slide Show view

Use Slide Show view to deliver your presentation to your audience. In this view, your slides occupy the full computer screen.

Presenter view

Presenter view helps you manage your slides while you present by tracking how much time has elapsed, which slide is next, and displaying notes that only you can see (while also allowing you to take meeting notes as you present).

Views for preparing and printing your presentation

To help you save paper and ink, you'll want to prepare your print job before you print. PowerPoint provides views and settings to help you specify what you want to print (slides, handouts, or notes pages) and how you want those jobs to print (in color, grayscale, black and white, with frames, and more).

Slide Sorter view: Slide Sorter view gives you a view of your slides in thumbnail form. This view makes it easy for you to sort and organize the sequence of your slides as you prepare to print them.

Print Preview: Print Preview lets you specify settings for what you want to print: handouts, notes pages, an outline, or slides.

In PowerPoint for the web, when your file is stored on OneDrive, the default view is Reading view. When your file is stored on OneDrive for work or school or SharePoint in Microsoft 365, the default view is Editing view.

View for creating your presentation

Editing view.

You can get to Editing View from the View tab or from the task bar at the bottom of the slide window.

Editing View is the editing mode where you'll work most frequently to create your slides. Editing View displays slide thumbnails on the left, a large window showing the current slide, and a Notes pane below the current slide where you can type speaker notes for that slide.

The slide sorter lets you see your slides on the screen in a grid that makes it easy to reorganize them, or organize them into sections, just by dragging and dropping them where you want them.

To add a section, right-click the first slide of your new section and select Add Section. See Organize your PowerPoint slides into sections for more information.

Views for delivering or viewing a presentation

Use Slide Show view to deliver your presentation to your audience. Slide Show view occupies the full computer screen, exactly the way your presentation looks on a big screen when your audience sees it.

Note: Reading View isn't available for PowerPoint for the web files stored in OneDrive for work or school/SharePoint in Microsoft 365.

Most people reviewing a PowerPoint presentation without a presenter will want to use Reading view. It displays the presentation in a full screen like Slide Show view, and it includes a few simple controls to make it easy to flip through the slides. You can also view speaker notes in Reading View.

COMMENTS

Deliver your presentation on two monitors. On the Slide Show tab, in the Set Up group, click Set Up Slide Show. In the Set Up Show dialog box, choose the options that you want, and then click OK. If you choose Automatic, PowerPoint will display speaker notes on the laptop monitor, if available. Otherwise, PowerPoint will display speaker notes ...

Open the PowerPoint presentation. Click "Slide Show." Click "Set Up Show." Check the "Show Presenter View" box in the dialog box that opens. This opens a navigation panel on the presenter's monitor that lets the presenter easily manage the multiple screens. Click the monitor you want the slide show presentation to appear on ...

Select the Use Presenter View checkbox. Select which monitor to display Presenter View on. Select From Beginning or press F5. In Presenter View, you can: See your current slide, next slide, and speaker notes. Select the arrows next to the slide number to go between slides. Select the pause button or reset button to pause or reset the slide ...

PowerPoint has a Monitor setup UI under Slide Show / Monitors. But it only allows the selection of the monitor to use for the Audience View and not the monitor used for the Presenter View. So I can get the audience view of the slide show to appear on the right monitor but the Presenter View is appearing on a monitor chosen by PowerPoint, not me ...

Start presenting. On the Slide Show tab, in the Start Slide Show group, select From Beginning. If you are working with PowerPoint on a single monitor and you want to display Presenter view, then in Slide Show view, open the options menu on the control bar at the bottom left and select Show Presenter View.

A few years ago PowerPoint introduced Presenter View Preview. This mode allows you to see Presenter View even if you only have one screen. It is a way to practice your presentation without having to connect to a projector. Using this mode can be helpful depending on the meeting platform you use.

Whenever I start a Powerpoint slideshow, the slideshow itself appears on monitor #1, and on monitor #2 I get a "presenter view". I can use the top-bar UI to switch between the two monitors (slideshow on #2, presenter view on #1) - that works fine. However, this setting doesn't persist.

I suggest you use an FHD screen or a lower resolution screen instead of a high-resolution screen for the slide show. Here is the section of my video that shows this tip. Use Presenter View windowed, not full screen. By default, Presenter View opens in full screen mode on one screen while the slides open full screen on the other screen.

The solution in Windows (for Mac, see below the video): In PowerPoint, go to the Slide Show ribbon. In the Monitors section, you set which monitor displays the Slide Show (not which monitor displays Presenter View). Use the drop-down list to select your external monitor. Now when you start the Slide Show, the slides will appear on the external ...

In PowerPoint, click on the "Slide Show" tab. Locate the "Monitors" group. Uncheck "Use Presenter View." In the "Monitors" group, click on "Monitor" to display the dropdown ...

For Office versions numbered 16.0, such as Office 2019, you can open Registry Editor, navigate to HKEY_CURRENT_USER\SOFTWARE\Microsoft\Office\16.0\PowerPoint\Options, and add a DWORD value named "UseAutoMonSelection" with the value data 0, which selects the Primary Monitor. But if you change the monitor in PowerPoint, the value data would ...
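As a rough sketch of the registry tweak described above, the Python snippet below builds the text of a `.reg` file that sets the `UseAutoMonSelection` DWORD to 0. The key path, value name, and value data come from the post itself. The helper name `build_reg_file` is made up for illustration, and the setting has not been verified here against any particular Office build. The snippet only produces text and does not touch the registry.

```python
# Key path, value name, and data are taken from the forum post above.
# Whether PowerPoint honors this value on a given build is an assumption.
KEY_PATH = r"HKEY_CURRENT_USER\SOFTWARE\Microsoft\Office\16.0\PowerPoint\Options"
VALUE_NAME = "UseAutoMonSelection"


def build_reg_file(key_path: str, value_name: str, dword: int) -> str:
    """Return .reg file text that sets one DWORD value (hypothetical helper)."""
    if not 0 <= dword <= 0xFFFFFFFF:
        raise ValueError("a DWORD must fit in 32 bits")
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        f"[{key_path}]",
        # .reg files store DWORD data as eight hexadecimal digits.
        f'"{value_name}"=dword:{dword:08x}',
        "",
    ]
    # Registry files conventionally use Windows line endings.
    return "\r\n".join(lines)


reg_text = build_reg_file(KEY_PATH, VALUE_NAME, 0)
print(reg_text)
```

Saving the printed text to a file with a `.reg` extension and reviewing it before importing keeps the change visible and easy to undo. Back up the registry before making any edit of this kind.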

You can then use DISPLAY SETTINGS and SWAP after launching the presentation if it is not on the right monitor. In my quick test, the proper monitor to select before launching was not intuitive, but if you experiment with the monitor selector drop-down menu, you should be able to achieve your goals.

To disable the presenter view: Within PowerPoint, click the [Slide Show] tab. Locate the "Monitors" group > Uncheck "Use Presenter View." Within the "Monitors" group, click the "Monitor" dropdown menu > Select the specific monitor on which the slideshow should display. (The default option reads "Automatic.") Need to enable or disable presenter ...

Steps. Enter Mission Control (F3 button with the little rectangles). On the private display select the '+' symbol in the upper-right to create a new virtual desktop (let's call this the "PowerPoint desktop"). Select the icon of your original desktop (the one with Powerpoint open) to return to it.

Starting from a windowed presentation means you can switch between Full Screen and the windowed presentation without showing the PowerPoint menus and other interface elements. It's a little neater and more professional. Go to Full Screen from the menu option. In Full Screen mode, press Escape to return to the windowed presentation.

If you prefer, however, you can specify that PowerPoint open in a different view, such as Slide Sorter view, Slide Show view, Notes Page view, and variations on Normal view. Click File > Options > Advanced. Under Display, in the Open all documents using this view list, select the view that you want to set as the new default, and then click OK.

With everybody on Teams now, Powerpoint presentations are a major pain point at work. I have shown people how to go to "Set up slide show" and click the strangely worded "Browsed by an individual (windowed)". But you have to do this for every presentation. Is there a way to set this windowed presentation mode as the default in Powerpoint?

It will open automatically in presentation mode. From Microsoft's site: PowerPoint Show .ppsx. A presentation that always opens in Slide Show view rather than in Normal view. Tip: To open this file format in Normal view so that you can edit the presentation, open PowerPoint. On the File menu, click Open, and then choose the file.
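To make the file-format point concrete, here is a minimal Python sketch that checks whether a file name uses a PowerPoint Show extension and so would be expected to open straight into Slide Show view. Only `.ppsx` is named in the text above. The `.ppsm` (macro-enabled show) and `.pps` (PowerPoint 97-2003 show) entries are added here as an assumption, and the function name is hypothetical.

```python
from pathlib import Path

# ".ppsx" comes from the text above. ".ppsm" and ".pps" are assumptions
# added for illustration: the macro-enabled and 97-2003 show formats.
SHOW_EXTENSIONS = {".ppsx", ".ppsm", ".pps"}


def opens_in_slide_show(filename: str) -> bool:
    """Return True if the extension marks the file as a PowerPoint Show."""
    return Path(filename).suffix.lower() in SHOW_EXTENSIONS


for name in ["review.pptx", "review.ppsx", "Kiosk.PPSX"]:
    mode = "Slide Show view" if opens_in_slide_show(name) else "the default editing view"
    print(f"{name}: expected to open in {mode}")
```

The check is by extension only. It does not inspect the file contents, so a renamed file would be reported according to its new name.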

How to display a slide show in a window: Select "Set Up Slide Show" on the "Slide Show" tab. Select the radio option "Browsed by an individual (window)". Start your PowerPoint show. To exit the show, use the "Normal" button to return to the file editing interface.

Every time I start a Powerpoint presentation, it starts on the wrong monitor. Is there a way to change the default monitor for presentations in Powerpoint? I realize there is the "Display Settings" option once the presentation has launched, but is there a way to configure it so that it saves which monitor to display on? Note: I have 3 monitors.

Views for creating your presentation: Normal view. You can get to Normal view from the task bar at the bottom of the slide window, or from the View tab on the ribbon. Normal view is the editing mode where you'll work most frequently to create your slides. Below, Normal view displays slide thumbnails on the left, a large window showing the current slide, and a section below the current slide ...

If you choose Slide Show > Set Up Slide Show and check Browsed by an individual (window), the presentation will appear in a moveable window instead of taking up the whole screen. (John Korchok, Production Manager, author of "OOXML Hacking - Unlocking Microsoft Office's Secrets".)